Understanding Probability Distributions in Actuarial Science

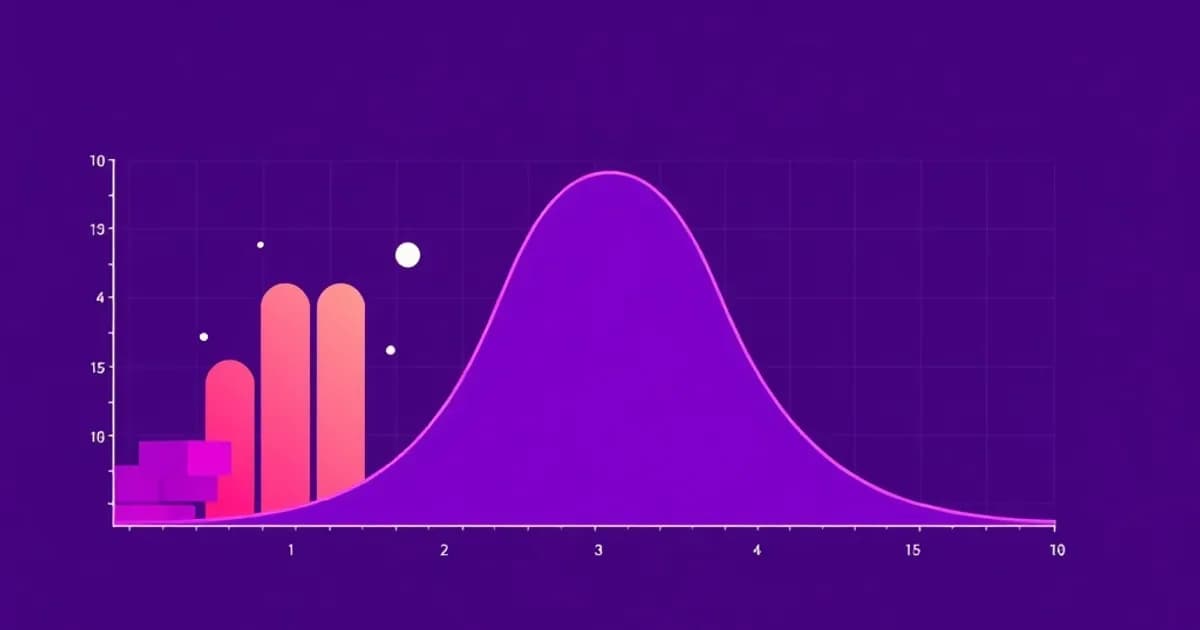

Probability distributions form the mathematical foundation of actuarial work. In loss distribution modeling, you select a mathematical function that best represents how insurance claims occur in reality.

Real-World Loss Patterns

Real-world losses don't follow uniform patterns. Most claims cluster around certain values, while a small number are extreme. This is why selecting the right distribution matters for accurate pricing and reserving.

Common distributions include:

- Normal distribution: Symmetric; a convenient reference case, but often a poor fit for individual claim sizes, which are non-negative and typically right-skewed

- Lognormal distribution: Handles right-skewed data common in insurance claims

- Exponential distribution: Models the time between claims

- Pareto distribution: Captures heavy-tailed behavior and extreme losses

Understanding Distribution Functions

Focus on probability density functions (PDFs) and cumulative distribution functions (CDFs), as these define how likely different loss values are. Understanding each distribution's parameters (mean, variance, shape parameters) helps you interpret real data and predict future claims.

The Pareto distribution is particularly important because it accounts for the small number of very large losses that disproportionately impact insurers.
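To make the idea of a heavy tail concrete, the sketch below compares tail probabilities (one minus the CDF) for an exponential and a Pareto distribution with the same mean. The parameter values are assumptions chosen for illustration, and the Pareto is taken to be the single-parameter (Type I) form with a minimum loss `x_min`.

```python
import math

def exponential_cdf(x, rate):
    """CDF of an exponential distribution with the given rate (1 / mean)."""
    return 1.0 - math.exp(-rate * x) if x >= 0 else 0.0

def pareto_cdf(x, alpha, x_min):
    """CDF of a Type I Pareto distribution with shape alpha and minimum x_min."""
    return 1.0 - (x_min / x) ** alpha if x >= x_min else 0.0

# Illustrative (assumed) parameters chosen so both distributions have mean 10,000.
ALPHA, X_MIN = 2.0, 5_000.0        # Pareto mean = alpha * x_min / (alpha - 1)
RATE = 1.0 / 10_000.0              # exponential mean = 1 / rate

def tail_probabilities(threshold):
    """Return (exponential, Pareto) probabilities of a loss above threshold."""
    return (1.0 - exponential_cdf(threshold, RATE),
            1.0 - pareto_cdf(threshold, ALPHA, X_MIN))

for t in (20_000, 50_000, 100_000):
    exp_tail, par_tail = tail_probabilities(t)
    print(f"P(X > {t:>7,}): exponential {exp_tail:.6f}, Pareto {par_tail:.6f}")
```

At moderate thresholds the two are comparable, but far into the tail the Pareto probability is much larger than the exponential one, even though the means match.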

Fitting Distributions to Empirical Loss Data

Once you understand theoretical distribution properties, the next critical skill is fitting them to actual loss data. This involves using statistical methods to determine which distribution best matches your observed claims.

Parameter Estimation Methods

Maximum likelihood estimation (MLE) is the most common approach. You find parameter values that make your observed data most probable under the chosen distribution.

The method of moments offers an alternative. You match the theoretical moments (mean, variance) of a distribution to the sample moments from your data.

Testing Goodness-of-Fit

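Assess whether your chosen distribution actually fits well using the following tests and criteria. The first two are formal goodness-of-fit tests; the information criteria rank competing models and penalize extra parameters: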

- Kolmogorov-Smirnov test

- Anderson-Darling test

- Akaike Information Criterion (AIC)

- Bayesian Information Criterion (BIC)

Actuaries often fit several distributions and compare their fit quality. Comparing candidates side by side helps you select the best-fitting model among those tested, though it cannot guarantee that any of them fits well.
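As a rough sketch of this comparison, the code below fits an exponential and a lognormal distribution by maximum likelihood and reports the AIC and the Kolmogorov-Smirnov statistic for each. It uses only the Python standard library; the simulated losses and all function names are assumptions made for illustration.

```python
import math
import random
from statistics import NormalDist

def fit_exponential(data):
    """MLE for the exponential rate: 1 / sample mean."""
    return len(data) / sum(data)

def fit_lognormal(data):
    """MLE for lognormal (mu, sigma): mean and divide-by-n std of the log data."""
    logs = [math.log(x) for x in data]
    mu = sum(logs) / len(logs)
    sigma = math.sqrt(sum((v - mu) ** 2 for v in logs) / len(logs))
    return mu, sigma

def exponential_loglik(data, rate):
    return sum(math.log(rate) - rate * x for x in data)

def lognormal_loglik(data, mu, sigma):
    return sum(
        -math.log(x * sigma * math.sqrt(2 * math.pi))
        - (math.log(x) - mu) ** 2 / (2 * sigma ** 2)
        for x in data
    )

def aic(loglik, n_params):
    """Akaike Information Criterion; lower is better."""
    return 2 * n_params - 2 * loglik

def ks_statistic(data, cdf):
    """Largest gap between the empirical CDF and a fitted CDF."""
    xs = sorted(data)
    n = len(xs)
    return max(
        max((i + 1) / n - cdf(x), cdf(x) - i / n) for i, x in enumerate(xs)
    )

def compare_fits(data):
    rate = fit_exponential(data)
    mu, sigma = fit_lognormal(data)
    normal = NormalDist(mu, sigma)
    return {
        "exponential": {
            "aic": aic(exponential_loglik(data, rate), 1),
            "ks": ks_statistic(data, lambda x: 1 - math.exp(-rate * x)),
        },
        "lognormal": {
            "aic": aic(lognormal_loglik(data, mu, sigma), 2),
            "ks": ks_statistic(data, lambda x: normal.cdf(math.log(x))),
        },
    }

# Simulated losses (assumed lognormal, for illustration only).
rng = random.Random(42)
losses = [rng.lognormvariate(9.0, 1.5) for _ in range(1000)]
for name, stats in compare_fits(losses).items():
    print(f"{name:12s} AIC = {stats['aic']:.1f}  KS = {stats['ks']:.4f}")
```

The model with the lower AIC and the smaller KS statistic is preferred. Note that p-values from standard KS tables are not exact when the parameters are estimated from the same data, so the statistic is used here only as a relative measure.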

Handling Real-World Data Complications

A crucial consideration is handling censored and truncated data, which is common in insurance. A deductible truncates the data from the left, because losses below it are never reported. A coverage limit censors the data from the right, because a loss above the limit is recorded only at the limit. Adjusting your fitting procedure for both, typically by modifying the likelihood, separates competent actuaries from novices. Practice with sample datasets using statistical software like R or Python.
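The adjustment can be sketched for the simplest case, an exponential severity, where the modified likelihood has a closed-form maximum. The example below assumes the limit is expressed as a maximum recorded ground-up loss; the simulated data, the parameter values, and the function name are hypothetical.

```python
import random

def exponential_mle_truncated_censored(losses, deductible, max_loss):
    """MLE of the exponential rate from losses observed only above a deductible
    (left truncation) and recorded at most at max_loss (right censoring).

    Each uncensored loss x contributes f(x) / S(deductible) to the likelihood
    and each censored loss contributes S(max_loss) / S(deductible). For the
    exponential this gives rate = (# uncensored) / sum(recorded - deductible).
    """
    uncensored = sum(1 for x in losses if x < max_loss)
    exposure = sum(min(x, max_loss) - deductible for x in losses)
    return uncensored / exposure

# Simulated ground-up losses with an assumed true mean of 20,000.
rng = random.Random(7)
TRUE_RATE = 1 / 20_000
DEDUCTIBLE, MAX_LOSS = 5_000, 60_000
ground_up = [rng.expovariate(TRUE_RATE) for _ in range(20_000)]

# The insurer only sees losses above the deductible, capped at the limit.
observed = [min(x, MAX_LOSS) for x in ground_up if x > DEDUCTIBLE]

naive_rate = len(observed) / sum(observed)      # ignores truncation and censoring
adjusted_rate = exponential_mle_truncated_censored(observed, DEDUCTIBLE, MAX_LOSS)
print(f"naive mean estimate:    {1 / naive_rate:,.0f}")
print(f"adjusted mean estimate: {1 / adjusted_rate:,.0f}")
```

The naive estimate treats the recorded values as if they were complete ground-up losses and is biased as a result; the adjusted estimate should land close to the assumed true mean. For other distributions the same likelihood contributions apply, but the maximum usually has to be found numerically.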

Key Distributions and Their Applications in Insurance

Different insurance products and claim types require different distribution models. Selecting the right one depends on your data characteristics.

Common Insurance Distributions

The exponential distribution is useful for modeling time between claims or simple claim severity data. It has one parameter, lambda, and exhibits the memoryless property.

The gamma distribution, controlled by shape parameter alpha and scale parameter beta, is flexible and handles various claim patterns. When alpha equals one, it becomes exponential. When alpha is large, it approaches normality.
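Both special cases can be checked numerically. The sketch below, with an assumed scale value, confirms that the gamma density with alpha equal to one matches the exponential density, and uses the gamma skewness, 2 divided by the square root of alpha, to show the shape becoming more symmetric as alpha grows.

```python
import math

def gamma_pdf(x, alpha, beta):
    """Gamma density with shape alpha and scale beta, for x > 0."""
    return x ** (alpha - 1) * math.exp(-x / beta) / (math.gamma(alpha) * beta ** alpha)

def exponential_pdf(x, rate):
    """Exponential density with the given rate, for x >= 0."""
    return rate * math.exp(-rate * x)

def gamma_skewness(alpha):
    """Skewness of a gamma distribution: 2 / sqrt(alpha)."""
    return 2.0 / math.sqrt(alpha)

# With alpha = 1 and scale beta, the gamma matches an exponential with rate 1 / beta.
beta = 2_000.0   # assumed scale, for illustration
for x in (100.0, 1_000.0, 5_000.0):
    print(x, gamma_pdf(x, 1.0, beta), exponential_pdf(x, 1.0 / beta))

# Skewness shrinks toward zero (the normal value) as alpha increases.
for alpha in (1, 4, 25, 100):
    print(alpha, gamma_skewness(alpha))
```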

The lognormal distribution is exceptionally valuable for property and casualty insurance. It naturally models right-skewed claims with occasional large losses. Taking the natural logarithm of lognormal losses produces a normal distribution.

The Pareto distribution is critical for modeling losses above a threshold. It reflects the Pareto principle: a small percentage of claims accounts for the majority of loss dollars.
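For a single-parameter (Type I) Pareto with shape alpha greater than one, the share of total expected losses produced by the largest fraction p of claims is p raised to the power 1 - 1/alpha. The short sketch below evaluates that expression; the function name and the shape values are illustrative assumptions.

```python
import math

def top_share(fraction, alpha):
    """Share of total expected losses from the largest `fraction` of claims,
    for a Type I Pareto with shape alpha > 1 (finite mean)."""
    if alpha <= 1:
        raise ValueError("alpha must exceed 1 for the mean to be finite")
    return fraction ** (1.0 - 1.0 / alpha)

alpha_80_20 = math.log(5) / math.log(4)   # about 1.16, the classic 80/20 shape
print(round(top_share(0.20, alpha_80_20), 3))   # 0.8
print(round(top_share(0.20, 3.0), 3))           # lighter tail, smaller share
```

The smaller the shape parameter, the more the total is dominated by a handful of very large claims.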

The beta distribution models proportions and probabilities bounded between zero and one. The Weibull distribution offers flexibility similar to gamma but with different mathematical properties.

Selecting Your Distribution

When selecting a distribution for your problem, consider:

- Does the data have extreme values?

- Is it symmetric or skewed?

- Does it have a natural lower or upper bound?

Your answers guide which distribution family to explore first.

Parameter Estimation and Practical Calculations

Parameter estimation transforms a theoretical distribution into a practical tool for your specific claims dataset. This step bridges theory and application.

Maximum Likelihood Estimation

Maximum likelihood estimation is the gold standard because, in large samples, its estimates are consistent and asymptotically efficient, and because the likelihood extends naturally to censored and truncated observations. For the exponential distribution with rate parameter lambda, the MLE is simply one divided by the sample mean.

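For the normal distribution, the MLEs are the sample mean and the sample variance computed with divisor n (not n minus one). The lognormal follows directly: its MLEs are the mean and divide-by-n standard deviation of the log losses. For distributions such as gamma or Weibull, or whenever the data are censored or truncated, there is generally no closed form and you solve the likelihood equations iteratively using numerical methods.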
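The sketch below shows two closed-form estimators alongside one numerical case. For the gamma distribution it maximizes the profile log-likelihood over the shape with a simple ternary search, which is one of several possible numerical approaches and assumes the shape lies between the `lo` and `hi` bounds. The simulated data and function names are hypothetical.

```python
import math
import random

def exponential_rate_mle(data):
    """Closed form: one divided by the sample mean."""
    return len(data) / sum(data)

def normal_mle(data):
    """Closed form: sample mean and the divide-by-n variance."""
    n = len(data)
    mean = sum(data) / n
    return mean, sum((x - mean) ** 2 for x in data) / n

def gamma_mle(data, lo=1e-3, hi=1e3, iterations=200):
    """Numerical MLE for the gamma (shape alpha, scale beta).

    For a fixed alpha the best scale is beta = mean / alpha, so only alpha
    needs a search. The profile log-likelihood has a single peak in alpha,
    which makes a ternary search sufficient.
    """
    n = len(data)
    mean = sum(data) / n
    mean_log = sum(math.log(x) for x in data) / n

    def profile(alpha):
        beta = mean / alpha
        # Average log-density per observation at (alpha, beta).
        return ((alpha - 1) * mean_log - mean / beta
                - alpha * math.log(beta) - math.lgamma(alpha))

    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if profile(m1) < profile(m2):
            lo = m1
        else:
            hi = m2
    alpha = (lo + hi) / 2
    return alpha, mean / alpha

# Simulated claims from an assumed gamma(shape=2, scale=1000).
rng = random.Random(0)
sample = [rng.gammavariate(2.0, 1000.0) for _ in range(5000)]
alpha_hat, beta_hat = gamma_mle(sample)
print(f"alpha = {alpha_hat:.3f}, beta = {beta_hat:.1f}")
```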

Method of Moments

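The method of moments provides a faster, simpler alternative. You equate sample moments to theoretical moments and solve for parameters. With the gamma distribution, the mean equals alpha times beta and the variance equals alpha times beta squared (only beta is squared). Setting these equal to the sample mean and sample variance and solving simultaneously gives beta as the sample variance divided by the sample mean, and alpha as the sample mean squared divided by the sample variance.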
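A minimal sketch of the gamma calculation follows. The claim amounts are made up for illustration, and the divide-by-n variance is used here as one common convention.

```python
from statistics import mean, pvariance

def gamma_method_of_moments(data):
    """Solve mean = alpha * beta and variance = alpha * beta**2 for (alpha, beta)."""
    m = mean(data)
    v = pvariance(data)          # divide-by-n sample variance
    beta = v / m                 # scale
    alpha = m * m / v            # shape
    return alpha, beta

# Hypothetical claim amounts, for illustration only.
claims = [1_200.0, 850.0, 3_400.0, 2_100.0, 640.0, 5_900.0, 1_750.0, 980.0]
alpha_hat, beta_hat = gamma_method_of_moments(claims)
print(f"alpha = {alpha_hat:.3f}, beta = {beta_hat:.1f}")
```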

Understanding Limitations and Uncertainty

Maximum likelihood estimates can be unstable with small sample sizes. Moment estimates may perform poorly with heavy-tailed distributions. Practical actuaries often employ both methods and compare results.

You must calculate confidence intervals around your estimates using the delta method or bootstrap resampling to quantify your uncertainty. Tail estimation is a specialized challenge. The largest claims are sparse but crucial for actuarial decision-making, making robust estimation of tail parameters essential for pricing and reserving.
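Bootstrap resampling can be sketched in a few lines. The example below builds a percentile bootstrap interval for the exponential rate; the simulated claims, the seed values, and the function names are assumptions for illustration, and other bootstrap variants exist.

```python
import random

def exponential_rate_mle(data):
    """MLE of the exponential rate: one divided by the sample mean."""
    return len(data) / sum(data)

def bootstrap_ci(data, estimator, n_resamples=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for estimator(data)."""
    rng = random.Random(seed)
    n = len(data)
    # Re-estimate on resamples drawn with replacement from the original data.
    estimates = sorted(
        estimator(rng.choices(data, k=n)) for _ in range(n_resamples)
    )
    tail = (1.0 - level) / 2.0
    lower = estimates[round(tail * n_resamples)]
    upper = estimates[round((1.0 - tail) * n_resamples) - 1]
    return lower, upper

# Simulated claims with an assumed mean of 8,000.
data_rng = random.Random(3)
claims = [data_rng.expovariate(1 / 8_000) for _ in range(200)]

rate_hat = exponential_rate_mle(claims)
lower, upper = bootstrap_ci(claims, exponential_rate_mle)
print(f"rate = {rate_hat:.6f}, 95% CI = ({lower:.6f}, {upper:.6f})")
```

A wide interval is a signal that the sample is too small to pin down the parameter, which matters most for the tail parameters discussed above.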

Practical Application: From Theory to Insurance Pricing

The ultimate purpose of mastering loss distributions is applying them to real insurance problems. This demonstrates why distribution modeling directly impacts company profitability and solvency.

Working Through a Property Insurance Example

Consider a property insurance scenario with claims data from the past five years, including small damage claims and several catastrophic events. Your first step is exploratory data analysis, creating histograms and Q-Q plots to visualize the data.

Identify which distributions might fit. Test lognormal and Pareto distributions as primary candidates. Using maximum likelihood estimation, fit each distribution and conduct goodness-of-fit tests.

Suppose the lognormal fits best, with parameters mu equal to 9.2 and sigma equal to 1.8 (both on the log scale). You can now calculate the probability that a claim exceeds a given threshold, which is a key input to pricing.
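With these parameters, an exceedance probability follows from the standard normal CDF applied to the log of the threshold. The sketch below uses Python's standard library; the thresholds are chosen only for illustration.

```python
import math
from statistics import NormalDist

MU, SIGMA = 9.2, 1.8   # fitted lognormal parameters from the example

def lognormal_exceedance(threshold, mu=MU, sigma=SIGMA):
    """P(X > threshold) for a lognormal loss X, where ln X ~ Normal(mu, sigma)."""
    z = (math.log(threshold) - mu) / sigma
    return 1.0 - NormalDist().cdf(z)

for threshold in (50_000, 100_000, 500_000):
    print(f"P(X > {threshold:>7,}) = {lognormal_exceedance(threshold):.4f}")
```

Under these parameters roughly one claim in ten exceeds 100,000 dollars, even though the median claim, exp(9.2), is a little under 10,000 dollars.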

Incorporating Policy Limits and Deductibles

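If your policy has a deductible of 50,000 dollars and a coverage limit of 500,000 dollars, calculate the expected payment after applying both: the insurer pays nothing on losses below the deductible, pays the excess over the deductible on larger losses, and caps its payment at the limit. This adjusted calculation reflects what the insurer actually pays.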
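One way to carry out this calculation is through the limited expected value, E[min(X, u)], which has a closed form for the lognormal. The sketch below assumes the 500,000 dollar limit is the maximum payment, so the policy covers ground-up losses between 50,000 and 550,000 dollars; if the limit instead caps the ground-up loss, the upper argument changes accordingly. Function names are hypothetical.

```python
import math
from statistics import NormalDist

MU, SIGMA = 9.2, 1.8          # fitted lognormal parameters from the example
PHI = NormalDist().cdf        # standard normal CDF

def limited_expected_value(u, mu=MU, sigma=SIGMA):
    """E[min(X, u)] for a lognormal loss X."""
    mean = math.exp(mu + sigma ** 2 / 2)
    z = (math.log(u) - mu) / sigma
    return mean * PHI(z - sigma) + u * (1.0 - PHI(z))

def expected_payment(deductible, limit, mu=MU, sigma=SIGMA):
    """Expected insurer payment per loss and per paid claim.

    Assumes the limit is the maximum payment, so the policy covers ground-up
    losses between `deductible` and `deductible + limit`.
    """
    per_loss = (limited_expected_value(deductible + limit, mu, sigma)
                - limited_expected_value(deductible, mu, sigma))
    prob_payment = 1.0 - PHI((math.log(deductible) - mu) / sigma)
    return per_loss, per_loss / prob_payment

per_loss, per_claim = expected_payment(50_000, 500_000)
print(f"expected payment per loss:       {per_loss:,.0f}")
print(f"expected payment per paid claim: {per_claim:,.0f}")
```

The per-loss figure averages over all losses, including those below the deductible that generate no payment; the per-claim figure conditions on the loss exceeding the deductible.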

Calculating Pure Premium and Setting Rates

For pricing, apply the pure premium formula: expected annual claims frequency times expected claim severity equals pure premium. Then add loadings for expenses, profit margin, and uncertainty to arrive at the actual premium charged.
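The arithmetic can be sketched as follows. The frequency, severity, and loading percentages are hypothetical, and applying loadings as proportions of the pure premium is only one simple convention among several used in practice.

```python
def pure_premium(frequency, severity):
    """Expected annual claim count times expected cost per claim."""
    return frequency * severity

def gross_premium(pure, expense_loading, profit_loading, risk_loading):
    """Apply loadings as proportions of the pure premium (one simple convention)."""
    return pure * (1.0 + expense_loading + profit_loading + risk_loading)

# Hypothetical inputs, for illustration only.
pp = pure_premium(frequency=0.05, severity=20_000.0)
premium = gross_premium(pp, expense_loading=0.25, profit_loading=0.05, risk_loading=0.10)
print(f"pure premium:  {pp:,.2f}")
print(f"gross premium: {premium:,.2f}")
```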

Tail value at risk (TVaR) calculations using your fitted distribution help you understand extreme scenarios and set aside adequate reserves. Sensitivity analysis shows how premium changes if your distribution assumptions shift, building confidence in your pricing model.
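For a lognormal severity both value at risk and TVaR have closed forms, so a basic sensitivity check takes only a few lines. The sketch below holds mu at the fitted 9.2 and varies sigma around 1.8; the 99% level and the alternative sigma values are assumptions for illustration.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()

def lognormal_var(p, mu, sigma):
    """Value at risk: the p-quantile of a lognormal loss."""
    return math.exp(mu + sigma * STD_NORMAL.inv_cdf(p))

def lognormal_tvar(p, mu, sigma):
    """Tail value at risk: E[X | X > VaR_p] for a lognormal loss."""
    z_p = STD_NORMAL.inv_cdf(p)
    mean = math.exp(mu + sigma ** 2 / 2)
    return mean * STD_NORMAL.cdf(sigma - z_p) / (1.0 - p)

# Sensitivity of the 99% measures to sigma, holding mu at the fitted 9.2.
for sigma in (1.6, 1.8, 2.0):
    print(f"sigma = {sigma}: VaR = {lognormal_var(0.99, 9.2, sigma):,.0f}, "
          f"TVaR = {lognormal_tvar(0.99, 9.2, sigma):,.0f}")
```

Small changes in sigma move the tail measures substantially, which is why tail parameters deserve the extra estimation effort described earlier.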