Understanding Containers and ECS Fundamentals

Containers are lightweight, portable units that bundle an application with all dependencies, libraries, and configuration files needed to run. Unlike virtual machines, which virtualize hardware and each run a full guest operating system, containers virtualize at the operating system level and share the host kernel. This makes them faster to start and lighter on resources.

What Are Docker Images and Containers?

Docker is the most popular containerization platform. Docker uses images (blueprints) to create containers (running instances). Images contain the application code and all dependencies in layers. When you run an image, Docker creates a container instance.

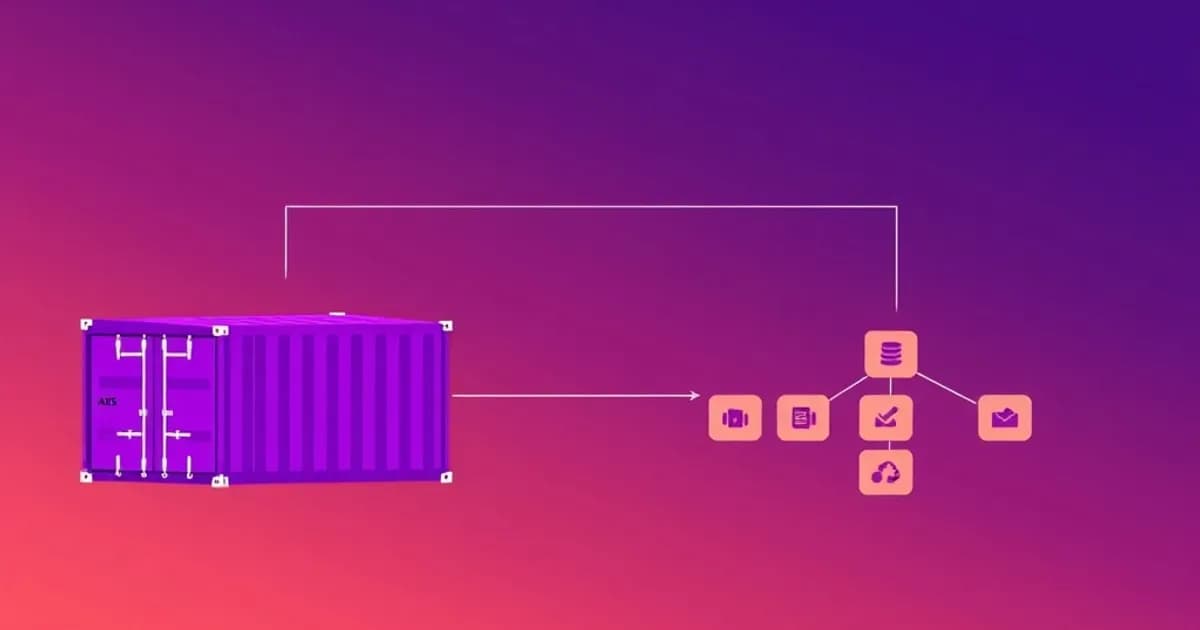

How AWS ECS Orchestrates Containers

AWS ECS (Elastic Container Service) is Amazon's native container orchestration service. It runs Docker containers on a cluster of EC2 instances or on serverless Fargate infrastructure. ECS handles task scheduling and placement itself, and integrates with Elastic Load Balancing, Application Auto Scaling, and CloudWatch for load balancing, scaling, and monitoring.

Core ECS Components

ECS is built from four core components that work together:

- Clusters: Logical groupings of resources where containers run

- Task definitions: Templates specifying container configuration, CPU, memory, environment variables, and logging

- Tasks: Running instances of task definitions

- Services: Schedulers that keep a desired count of tasks running long term, replace failed tasks, and register tasks with a load balancer

AWS Solutions Architect questions frequently test your ability to design container solutions meeting requirements like high availability and cost optimization. Focus on how each component interacts and relates to others. Create flashcards pairing ECS components with their functions and use cases.

ECS Launch Types: EC2 vs Fargate

ECS offers two launch types that fundamentally change how you manage infrastructure. Each has distinct operational and cost characteristics.

EC2 Launch Type: Maximum Control

The EC2 launch type requires you to provision and manage EC2 instances forming your ECS cluster. You handle instance lifecycle management, OS patching, capacity planning, and cost optimization.

This option works best for long-running workloads with steady, predictable resource utilization. You can reduce costs through Reserved Instances, Savings Plans, or Spot Instances. The EC2 launch type gives you maximum control over your infrastructure, including instance type, AMI, and host-level configuration.

Fargate: Serverless Containers

Fargate is serverless, meaning you run containers without managing the underlying servers. AWS handles server provisioning and patching. You pay for the vCPU and memory you configure for each task, billed for the time the task runs, regardless of how much of that allocation the containers actually use.

Fargate excels for variable or bursty workloads. It eliminates operational overhead but typically costs more per compute unit than EC2.

Key Trade-offs to Understand

- EC2: Better economics for steady workloads, allows customization, requires more management

- Fargate: Simplicity and no instances to scale or patch, ideal for microservices, higher per-unit cost. Task count scaling still has to be configured through service auto scaling
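The cost side of this trade-off can be made concrete with a little arithmetic. The sketch below compares a steady fleet with a bursty one. Every price and instance size in it is a made-up placeholder, not an AWS list price, and the function names are hypothetical. It also ignores the memory an EC2 host reserves for the OS and the ECS agent.

```python
import math

HOURS_PER_MONTH = 730  # common approximation for one month

# Placeholder numbers for illustration only; look up real prices for your region.
FARGATE_PRICE_PER_VCPU_HOUR = 0.04
FARGATE_PRICE_PER_GB_HOUR = 0.004
EC2_PRICE_PER_INSTANCE_HOUR = 0.10  # hypothetical instance type
EC2_VCPU_PER_INSTANCE = 4
EC2_GB_PER_INSTANCE = 16


def fargate_monthly_cost(task_count, vcpu, memory_gb, hours=HOURS_PER_MONTH):
    """Fargate bills per task for configured vCPU and memory while the task runs."""
    per_task_hour = (vcpu * FARGATE_PRICE_PER_VCPU_HOUR
                     + memory_gb * FARGATE_PRICE_PER_GB_HOUR)
    return task_count * per_task_hour * hours


def ec2_monthly_cost(task_count, vcpu, memory_gb, hours=HOURS_PER_MONTH):
    """EC2 bills per instance, whether or not its capacity is fully used."""
    tasks_per_instance = min(math.floor(EC2_VCPU_PER_INSTANCE / vcpu),
                             math.floor(EC2_GB_PER_INSTANCE / memory_gb))
    if tasks_per_instance < 1:
        raise ValueError("task does not fit on the chosen instance type")
    instances = math.ceil(task_count / tasks_per_instance)
    return instances * EC2_PRICE_PER_INSTANCE_HOUR * hours


# Steady workload: 16 small tasks running all month.
steady_fargate = fargate_monthly_cost(16, vcpu=0.5, memory_gb=1)
steady_ec2 = ec2_monthly_cost(16, vcpu=0.5, memory_gb=1)

# Bursty workload: 3 tasks that run about 60 hours a month,
# compared with keeping an EC2 instance up the whole month.
bursty_fargate = fargate_monthly_cost(3, vcpu=0.5, memory_gb=1, hours=60)
always_on_ec2 = ec2_monthly_cost(3, vcpu=0.5, memory_gb=1)

print(f"steady: fargate={steady_fargate:.2f} ec2={steady_ec2:.2f}")
print(f"bursty: fargate={bursty_fargate:.2f} ec2={always_on_ec2:.2f}")
```

With these placeholder numbers the densely packed EC2 fleet is cheaper for the steady case and Fargate is cheaper for the bursty one. Real results depend on actual prices and on how well tasks pack onto instances.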

For the Solutions Architect exam, you need to quickly identify which launch type suits different scenarios. Study the cost models, operational requirements, and scaling characteristics. Create flashcards comparing these side-by-side with specific workload examples.

Task Definitions, Services, and Load Balancing

Task definitions are JSON templates describing how Docker images run on ECS. They specify the Docker image URI, container name, CPU and memory allocations, environment variables, port mappings, logging configuration, and IAM roles (a task role for the application's own AWS API calls and an execution role that ECS uses to pull images and write logs).
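As a rough illustration, a small Fargate-compatible task definition might look like the following, built here as a Python dictionary and serialized to JSON. The account ID, role names, image URI, and log group are all placeholders invented for this sketch.

```python
import json

# All ARNs, names, and the image URI below are placeholders for illustration.
task_definition = {
    "family": "web-app",
    "networkMode": "awsvpc",
    "requiresCompatibilities": ["FARGATE"],
    "cpu": "256",     # task-level CPU units (1024 units = 1 vCPU)
    "memory": "512",  # task-level memory in MiB
    "executionRoleArn": "arn:aws:iam::123456789012:role/ecsTaskExecutionRole",
    "taskRoleArn": "arn:aws:iam::123456789012:role/webAppTaskRole",
    "containerDefinitions": [
        {
            "name": "web",
            "image": "123456789012.dkr.ecr.us-east-1.amazonaws.com/web-app:1.0.0",
            "essential": True,
            "portMappings": [{"containerPort": 8080, "protocol": "tcp"}],
            "environment": [{"name": "APP_ENV", "value": "production"}],
            "logConfiguration": {
                "logDriver": "awslogs",
                "options": {
                    "awslogs-group": "/ecs/web-app",
                    "awslogs-region": "us-east-1",
                    "awslogs-stream-prefix": "web",
                },
            },
        }
    ],
}

task_definition_json = json.dumps(task_definition, indent=2)
print(task_definition_json)
```

Saved to a file, JSON of this shape could be registered with `aws ecs register-task-definition --cli-input-json file://taskdef.json`.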

Configuring Task Definition Resources

Understanding task definition parameters is essential because exam questions test your ability to configure proper resource limits and logging. Two critical parameters are:

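- Reservations: The amount the scheduler sets aside for a container when placing a task. A memory reservation is a soft limit, so the container can use more if the host has memory to spare

- Limits: Hard caps on consumption. A container that exceeds its hard memory limit is killed rather than throttled, while CPU shares typically constrain a container only when the host is under contention

Both work together to balance performance and cost. Set reservations for baseline needs and limits to prevent runaway resource consumption. Larger reservations leave room for fewer tasks per instance, which raises cost.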
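For memory, the two settings correspond to the container definition parameters `memoryReservation` (soft limit) and `memory` (hard limit), both in MiB. The sketch below shows a container definition fragment and a hypothetical helper, `memory_outcome`, that classifies what would be expected to happen at a given usage level. It is a simplified model, not the behavior of any ECS API.

```python
# Container definition fragment; the values are arbitrary examples.
container_definition = {
    "name": "web",
    "cpu": 128,                # CPU units (1024 units = 1 vCPU)
    "memoryReservation": 256,  # soft limit in MiB, used for placement
    "memory": 512,             # hard limit in MiB
}


def memory_outcome(usage_mib, memory_reservation_mib, memory_mib):
    """Classify a memory usage level against the soft and hard limits."""
    if memory_reservation_mib >= memory_mib:
        # When both are set, the hard limit must exceed the reservation.
        raise ValueError("memory must be greater than memoryReservation")
    if usage_mib > memory_mib:
        return "killed"  # exceeding the hard limit terminates the container
    if usage_mib > memory_reservation_mib:
        return "bursting"  # allowed only while the host has spare memory
    return "within reservation"


for usage in (200, 400, 600):
    outcome = memory_outcome(usage,
                             container_definition["memoryReservation"],
                             container_definition["memory"])
    print(usage, outcome)
```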

Services and Load Balancing

Services manage long-running applications by maintaining a desired number of tasks. If a task fails, the service automatically launches a replacement, providing high availability. Services integrate seamlessly with Elastic Load Balancing.
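The replacement behavior can be pictured as a reconciliation loop that compares the desired count with the tasks that are still active. The following is a conceptual toy model with a hypothetical `reconcile` function, not the actual ECS service scheduler.

```python
ACTIVE_STATUSES = ("PENDING", "RUNNING")


def reconcile(desired_count, tasks):
    """Return how many tasks to launch or stop to reach the desired count.

    `tasks` maps a task ID to its last known status.
    """
    active = [task_id for task_id, status in tasks.items()
              if status in ACTIVE_STATUSES]
    return {
        "launch": max(0, desired_count - len(active)),
        "stop": max(0, len(active) - desired_count),
    }


# One of three tasks has failed, so one replacement is needed.
current_tasks = {"task-a": "RUNNING", "task-b": "STOPPED", "task-c": "RUNNING"}
actions = reconcile(3, current_tasks)
print(actions)
```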

Choose your load balancer based on traffic type:

- Application Load Balancer (ALB): Best for HTTP/HTTPS traffic with path-based or hostname-based routing

- Network Load Balancer (NLB): Best for TCP/UDP traffic at layer 4 that needs very high throughput, low latency, or static IP addresses

Deployment Configuration

When designing ECS solutions, understand these service parameters:

- Desired count: Number of running tasks

- Deployment configuration: The minimum healthy percent and maximum percent, which bound how many tasks may be stopped or added at once during a rolling deployment

- Placement strategies: How to distribute tasks across cluster instances
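These parameters appear together in a service definition. The sketch below shows one possible set of values, with placeholder names and ARNs, plus a hypothetical helper that works out the task-count bounds a rolling deployment has to stay within. The rounding (minimum rounded up, maximum rounded down) follows my reading of the ECS documentation, so treat it as an assumption to verify.

```python
import math

# Placeholder names and ARNs; the keys mirror the ECS CreateService request.
service_request = {
    "cluster": "demo-cluster",
    "serviceName": "web",
    "taskDefinition": "web-app:1",
    "desiredCount": 4,
    "launchType": "EC2",
    "deploymentConfiguration": {
        "minimumHealthyPercent": 50,
        "maximumPercent": 200,
    },
    "placementStrategy": [
        # Spread across Availability Zones first, then pack by memory.
        {"type": "spread", "field": "attribute:ecs.availability-zone"},
        {"type": "binpack", "field": "memory"},
    ],
    "loadBalancers": [
        {
            "targetGroupArn": "arn:aws:elasticloadbalancing:us-east-1:"
                              "123456789012:targetgroup/web/0123456789abcdef",
            "containerName": "web",
            "containerPort": 8080,
        }
    ],
}


def rolling_update_bounds(desired_count, minimum_healthy_percent, maximum_percent):
    """Return (fewest healthy tasks allowed, most tasks allowed) during a deploy."""
    lower = math.ceil(desired_count * minimum_healthy_percent / 100)
    upper = math.floor(desired_count * maximum_percent / 100)
    return lower, upper


config = service_request["deploymentConfiguration"]
bounds = rolling_update_bounds(service_request["desiredCount"],
                               config["minimumHealthyPercent"],
                               config["maximumPercent"])
print(bounds)
```

With a desired count of 4, a 50% minimum and a 200% maximum, the deployment may drop to 2 healthy tasks or grow to 8 while old and new tasks overlap.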

Task definitions are static templates, while services are dynamic orchestration layers. Create flashcards explaining each parameter's purpose and test scenarios requiring value adjustments.

Scaling, Monitoring, and Auto-Scaling Strategies

ECS auto-scaling operates at two levels: cluster-level (adding or removing EC2 instances) and service-level (adjusting task counts). Understanding both is critical for production designs.

Service-Level Scaling

Use service auto scaling, which is built on Application Auto Scaling, to adjust a service's desired task count based on CloudWatch metrics like CPU utilization, memory utilization, or custom metrics. This works the same way for both launch types. Define scaling policies using:

- Target tracking: Automatically scale to maintain a target metric value

- Step scaling: Scale in discrete increments based on metric thresholds
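A target tracking policy for an ECS service might be described as below. The keys follow the Application Auto Scaling `PutScalingPolicy` request, while the cluster name, service name, and numbers are placeholders. The `estimate_desired_count` helper is a deliberately simplified proportional approximation added for intuition. It is not the algorithm AWS uses.

```python
import math

# Placeholder cluster/service names and thresholds.
scaling_policy = {
    "PolicyName": "cpu-target-tracking",
    "ServiceNamespace": "ecs",
    "ResourceId": "service/demo-cluster/web",
    "ScalableDimension": "ecs:service:DesiredCount",
    "PolicyType": "TargetTrackingScaling",
    "TargetTrackingScalingPolicyConfiguration": {
        "TargetValue": 60.0,  # aim for 60% average CPU
        "PredefinedMetricSpecification": {
            "PredefinedMetricType": "ECSServiceAverageCPUUtilization",
        },
        "ScaleOutCooldown": 60,   # seconds
        "ScaleInCooldown": 120,   # seconds
    },
}


def estimate_desired_count(current_count, metric_value, target_value,
                           min_count, max_count):
    """Simplified model: scale the task count in proportion to metric/target."""
    proposed = math.ceil(current_count * metric_value / target_value)
    return max(min_count, min(max_count, proposed))


target = scaling_policy["TargetTrackingScalingPolicyConfiguration"]["TargetValue"]
scale_out = estimate_desired_count(4, 90.0, target, min_count=2, max_count=10)
scale_in = estimate_desired_count(4, 30.0, target, min_count=2, max_count=10)
print(scale_out, scale_in)
```

Step scaling differs in that you define the thresholds and the size of each adjustment yourself instead of naming a target value.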

For Fargate, auto-scaling is simpler because there are no instances to manage. Task count is the only thing you scale.

Cluster Capacity Considerations

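For EC2 launch type, a service cannot scale out when no container instance has enough free CPU, memory, or ports for another task. The scheduler records the placement failure in the service events, and the service stays below its desired count until capacity is added, even with auto-scaling policies configured. Unless you watch those events, the failure is easy to miss.

Capacity providers solve this problem by linking the cluster to an EC2 Auto Scaling group. With managed scaling enabled, ECS adjusts the size of the group when tasks need more capacity than the cluster has. This prevents the common failure scenario where services cannot scale because capacity is exhausted.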
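To see why capacity matters, the following toy model places tasks on instances first-fit and reports how many could not be placed. The function name, instance sizes, and the first-fit rule are assumptions made for this sketch. Real ECS placement also considers ports, constraints, and placement strategies.

```python
def place_tasks(instances, task_cpu, task_memory, task_count):
    """First-fit placement. Returns (placed, unplaced) task counts.

    `instances` is a list of dicts with free "cpu" (units) and "memory" (MiB).
    """
    remaining = [dict(instance) for instance in instances]  # leave input untouched
    placed = 0
    for _ in range(task_count):
        for instance in remaining:
            if instance["cpu"] >= task_cpu and instance["memory"] >= task_memory:
                instance["cpu"] -= task_cpu
                instance["memory"] -= task_memory
                placed += 1
                break
    return placed, task_count - placed


# Two hypothetical instances, each with 2048 CPU units and 4096 MiB free.
cluster = [{"cpu": 2048, "memory": 4096}, {"cpu": 2048, "memory": 4096}]

# The service wants 10 tasks of 512 CPU units / 1024 MiB each.
before = place_tasks(cluster, 512, 1024, 10)

# Adding one more instance, as a capacity provider would, makes room for the rest.
after = place_tasks(cluster + [{"cpu": 2048, "memory": 4096}], 512, 1024, 10)
print(before, after)
```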

Monitoring and Events

Monitoring ECS requires multiple CloudWatch metrics: task count, CPU/memory utilization, service deployment status, and custom application metrics. Container Insights provides enhanced monitoring, collecting metrics at cluster, service, and task levels.

ECS sends events to CloudWatch Events (now EventBridge) for task state changes, service deployment changes, and container instance status changes. These events are essential for troubleshooting and automation.
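As an example, an EventBridge rule that reacts to stopped tasks could use the event pattern below. The sample event is hand-written and trimmed to a few fields, and `matches` is a simplified stand-in for EventBridge's matching that handles only exact-value lists and nested objects.

```python
import json

# Event pattern for an EventBridge rule: ECS tasks that reached STOPPED.
stopped_task_pattern = {
    "source": ["aws.ecs"],
    "detail-type": ["ECS Task State Change"],
    "detail": {"lastStatus": ["STOPPED"]},
}


def matches(pattern, event):
    """Simplified matcher: exact-value lists and nested dicts only."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


def summarize(event):
    """Build a one-line summary that is handy in alerts or logs."""
    detail = event["detail"]
    task_id = detail["taskArn"].split("/")[-1]
    return f"{task_id} stopped: {detail.get('stoppedReason', 'unknown')}"


# Hand-written sample event with placeholder ARNs.
sample_event = {
    "source": "aws.ecs",
    "detail-type": "ECS Task State Change",
    "detail": {
        "clusterArn": "arn:aws:ecs:us-east-1:123456789012:cluster/demo-cluster",
        "taskArn": "arn:aws:ecs:us-east-1:123456789012:task/demo-cluster/abc123",
        "lastStatus": "STOPPED",
        "stoppedReason": "Essential container in task exited",
    },
}

if matches(stopped_task_pattern, sample_event):
    print(summarize(sample_event))
print(json.dumps(stopped_task_pattern))
```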

Focus on how these components work together: your application generates metrics, CloudWatch processes them, scaling policies trigger adjustments, and events notify you of changes. Create flashcards covering scaling policy types, metric options, and troubleshooting scenarios.

Container Networking, Logging, and Security Best Practices

ECS networking depends on your launch type and networking mode. Each mode has distinct characteristics affecting security and service discovery.

Network Modes

awsvpc network mode (required for Fargate) assigns an ENI (Elastic Network Interface) to each task, giving it its own private IP address within your VPC. This enables security groups per task, while network ACLs continue to apply at the subnet level.

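Bridge network mode (the default for Linux tasks on EC2) maps container ports to ports on the host EC2 instance, optionally using dynamic host ports so several copies of a task can share one instance. This mode requires more careful port management but avoids consuming an ENI per task.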

Understanding these modes is critical because they affect port mapping, security architecture, and service discovery.
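The difference shows up directly in the `portMappings` of a container definition. The sketch below contrasts the two modes and adds a hypothetical `validate_port_mappings` check for one rule: in awsvpc mode the host port must be omitted or equal to the container port.

```python
# awsvpc: the task has its own ENI, so the container port is used directly.
awsvpc_port_mappings = [{"containerPort": 8080, "protocol": "tcp"}]

# bridge: hostPort 0 asks for a dynamically assigned host port, which lets
# several copies of the same task run on one instance.
bridge_port_mappings = [{"containerPort": 8080, "hostPort": 0, "protocol": "tcp"}]


def validate_port_mappings(network_mode, port_mappings):
    """Return a list of problems found; an empty list means none were found."""
    problems = []
    for mapping in port_mappings:
        host_port = mapping.get("hostPort")
        container_port = mapping["containerPort"]
        if network_mode == "awsvpc" and host_port not in (None, container_port):
            problems.append(
                f"awsvpc: hostPort {host_port} must match containerPort {container_port}"
            )
    return problems


print(validate_port_mappings("awsvpc", awsvpc_port_mappings))
print(validate_port_mappings("bridge", bridge_port_mappings))
print(validate_port_mappings("awsvpc", bridge_port_mappings))
```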

Logging Best Practices

For production ECS deployments, logging is non-negotiable. ECS integrates with CloudWatch Logs, Splunk, Datadog, and other platforms through log drivers in task definitions.

CloudWatch Logs is the native AWS solution and integrates with ECS through the awslogs log driver. Each container can send logs to different log groups and streams. Implement appropriate retention policies (for example 7 days, 30 days, or never expire) based on compliance and cost.

Security Implementation

Security best practices include:

- Run containers with minimal privileges (no root unless necessary)

- Use private ECR (Elastic Container Registry) repositories

- Implement image scanning for vulnerabilities

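- Use IAM task roles to grant each task only the permissions its application needs, separate from the execution role ECS uses to pull images and write logs

- Never embed credentials in Docker images or as plaintext environment variables in task definitions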

- Use Secrets Manager or Parameter Store to inject secrets at runtime

- Implement network isolation through security groups and private subnets
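Several of these practices are visible in the container definition itself. In the sketch below, the `secrets` entries reference Secrets Manager and Parameter Store by ARN so that values are injected at runtime, and `user` runs the process as a non-root user. The ARNs and names are placeholders, and `find_plaintext_secrets` is a hypothetical lint helper based on a crude name match, not an AWS feature.

```python
# Placeholder ARNs and names for illustration.
container_definition = {
    "name": "web",
    "image": "123456789012.dkr.ecr.us-east-1.amazonaws.com/web-app:1.0.0",
    "user": "1000",  # run as a non-root user
    "environment": [{"name": "APP_ENV", "value": "production"}],
    "secrets": [
        {
            "name": "DB_PASSWORD",
            "valueFrom": "arn:aws:secretsmanager:us-east-1:123456789012:"
                         "secret:prod/db-password-AbCdEf",
        },
        {
            "name": "API_TOKEN",
            "valueFrom": "arn:aws:ssm:us-east-1:123456789012:parameter/prod/api-token",
        },
    ],
}

SENSITIVE_MARKERS = ("PASSWORD", "SECRET", "TOKEN", "API_KEY")


def find_plaintext_secrets(definition):
    """Flag environment variables whose names suggest they hold credentials."""
    return [
        entry["name"]
        for entry in definition.get("environment", [])
        if any(marker in entry["name"].upper() for marker in SENSITIVE_MARKERS)
    ]


# A definition that leaks a credential through a plain environment variable.
risky_definition = {
    "name": "web",
    "environment": [
        {"name": "APP_ENV", "value": "production"},
        {"name": "db_password", "value": "hunter2"},
    ],
}

print(find_plaintext_secrets(container_definition))
print(find_plaintext_secrets(risky_definition))
```

For the `secrets` entries to resolve, the task's execution role needs permission to read the referenced secret or parameter.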

Container image management requires image tagging strategies, automatic cleanup of unused images, and scanning before deployment. Study the complete chain from image creation through production. Create flashcards addressing logging configuration options, security best practices, and troubleshooting scenarios.