AWS Database Migration Service Architecture and Components

Core DMS Components

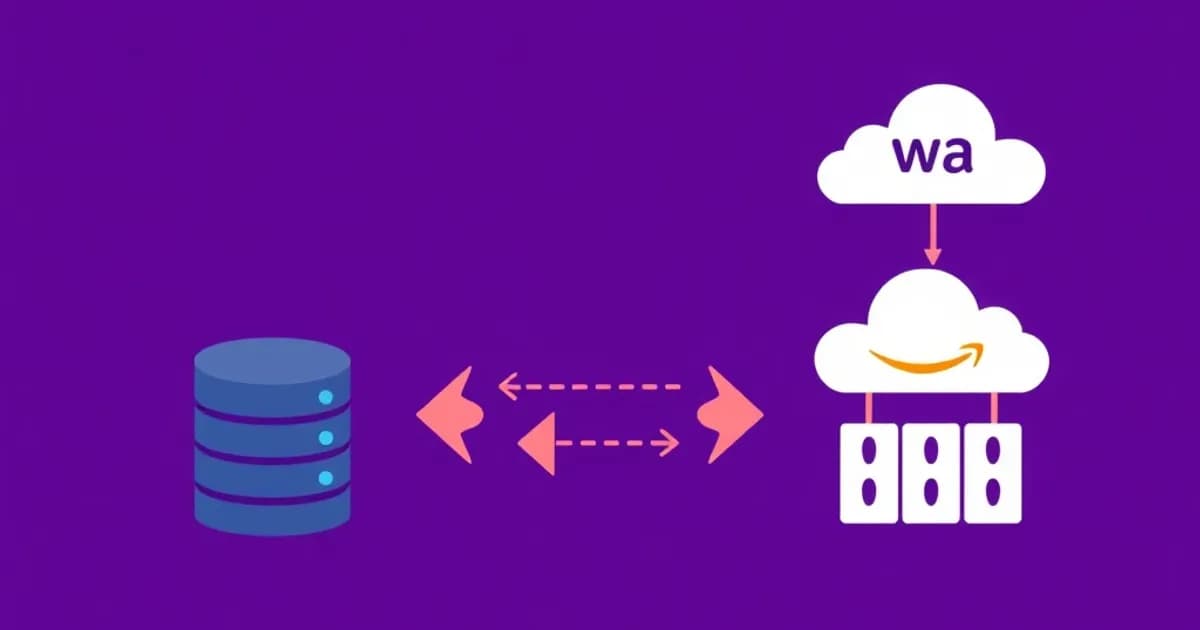

AWS DMS consists of several interconnected components working together for seamless migrations. The replication instance is the core compute engine that handles all migration work. It manages connections to both source and target databases simultaneously and performs the actual data transfer.

Replication instances come in various sizes for different needs. Small instances like dms.t3.micro work for proof-of-concept migrations. Larger instances like dms.c5.9xlarge handle large-scale operations with higher throughput.

Endpoints and Migration Tasks

Source and target endpoints define connection parameters and credentials for your databases. DMS supports heterogeneous migrations between different database engines. For example, you can migrate from Oracle to PostgreSQL or from SQL Server to MySQL.
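As a rough illustration, the connection parameters for a source and a target endpoint can be collected into dictionaries shaped like the keyword arguments of boto3's DMS `create_endpoint` call. This is a minimal sketch: the helper name `endpoint_params`, the host names, and the credentials are hypothetical placeholders, and nothing is sent to AWS.

```python
def endpoint_params(identifier, endpoint_type, engine, host, port,
                    database, username, password):
    """Return keyword arguments shaped for a DMS create_endpoint request."""
    if endpoint_type not in ("source", "target"):
        raise ValueError("endpoint_type must be 'source' or 'target'")
    return {
        "EndpointIdentifier": identifier,
        "EndpointType": endpoint_type,
        "EngineName": engine,
        "ServerName": host,
        "Port": port,
        "DatabaseName": database,
        "Username": username,
        "Password": password,
    }


# Heterogeneous pair: Oracle source, PostgreSQL target (all values are placeholders).
source = endpoint_params("oracle-src", "source", "oracle",
                         "onprem-db.example.internal", 1521, "ORCL",
                         "dms_user", "change-me")
target = endpoint_params("postgres-tgt", "target", "postgres",
                         "target-db.example.internal", 5432, "appdb",
                         "dms_user", "change-me")
```

In practice, credentials would normally come from a secrets store rather than being written inline.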

The migration task is the actual job that moves your data. It includes table mappings, transformation rules, and logging settings. DMS processes data according to your configuration and applies changes to the target database.

Change Data Capture and Synchronization

Change Data Capture (CDC) tracks ongoing database changes after the initial data transfer. This keeps the target database synchronized with the source. DMS supports a combined full load plus CDC task for near-zero-downtime migrations.

Because the target keeps receiving changes until cutover, applications can switch to it once replication lag is close to zero. Understanding these components helps you design migration strategies and troubleshoot production issues.

Replication Instances and Performance Optimization

Choosing the Right Instance Size

The replication instance determines your migration speed and capacity. Instance selection is a critical architectural decision. Consider database size, network bandwidth, and migration complexity when choosing.

Small burstable instances like dms.t3.micro work for proof-of-concept migrations and lightweight operations. The compute-optimized dms.c5 instances provide better performance for production migrations with large datasets. Available CPU, memory, and network bandwidth directly affect throughput and migration duration.

High Availability and Failover

Multi-AZ replication instances provide high availability and automatic failover. This is essential for business-critical migrations where any interruption could impact operations. Multi-AZ deployments cost more but add reliability for production workloads.
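The instance class and the Multi-AZ choice are both plain parameters of the replication instance. The sketch below builds a dictionary shaped like the keyword arguments of boto3's DMS `create_replication_instance` call; the helper name, identifier, and storage size are hypothetical, and no request is made.

```python
def replication_instance_params(identifier, instance_class, storage_gb, multi_az):
    """Return keyword arguments shaped for a DMS create_replication_instance request."""
    if not instance_class.startswith("dms."):
        raise ValueError("DMS instance classes start with 'dms.'")
    return {
        "ReplicationInstanceIdentifier": identifier,
        "ReplicationInstanceClass": instance_class,
        "AllocatedStorage": storage_gb,   # storage in GiB
        "MultiAZ": multi_az,              # True adds a standby in another AZ
        "PubliclyAccessible": False,
    }


# Proof of concept: small, single-AZ. Production: larger, Multi-AZ.
poc = replication_instance_params("poc-instance", "dms.t3.micro", 50, False)
prod = replication_instance_params("prod-instance", "dms.c5.9xlarge", 500, True)
```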

Performance Tuning Strategies

Optimize migration speed with several key techniques. Increase the MaxFullLoadSubTasks setting to load more tables concurrently during full load. For target engines that support it, increase ParallelLoadThreads to use more threads per table. Enable BatchApplyEnabled for better performance during CDC. Optimize network connectivity between the replication instance and your databases.
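These tuning knobs live in the task settings JSON. In that document, `MaxFullLoadSubTasks` (under `FullLoadSettings`) caps how many tables load at once, while `ParallelLoadThreads` and `BatchApplyEnabled` sit under `TargetMetadata`. The following is a minimal sketch with a hypothetical helper name and illustrative values, not recommended defaults.

```python
import json


def tuning_settings(tables_in_parallel=8, threads_per_table=0, batch_apply=True):
    """Return a task-settings JSON fragment with the main throughput knobs."""
    if not 1 <= tables_in_parallel <= 49:
        raise ValueError("MaxFullLoadSubTasks must be between 1 and 49")
    settings = {
        "FullLoadSettings": {
            # Number of tables loaded concurrently during full load.
            "MaxFullLoadSubTasks": tables_in_parallel,
        },
        "TargetMetadata": {
            # Threads per table; only honored by some target engines.
            "ParallelLoadThreads": threads_per_table,
            # Apply CDC changes in batches instead of one transaction at a time.
            "BatchApplyEnabled": batch_apply,
        },
    }
    return json.dumps(settings, indent=2)


print(tuning_settings(tables_in_parallel=16))
```

Higher parallelism increases load on both the source and the target, so it is worth raising these values gradually while watching the instance metrics.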

AWS recommends placing replication instances in the same VPC as your target AWS database. For on-premises source databases, use AWS Direct Connect for optimal performance.

Monitoring and Bottleneck Identification

Monitor CloudWatch metrics such as CPUUtilization, FreeableMemory, and network throughput during migrations, along with CDCLatencySource and CDCLatencyTarget for ongoing replication. These metrics help you identify bottlenecks and tune proactively. Check them regularly to catch performance issues early.
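One way to turn metric readings into bottleneck hints is a simple threshold check. The sketch below assumes the latest values have already been fetched from CloudWatch into a dictionary keyed by metric name; the function name and all thresholds are hypothetical and should be tuned for your workload.

```python
def find_bottlenecks(samples, cpu_limit=80.0,
                     min_free_memory_bytes=1024 ** 3,
                     max_latency_seconds=60.0):
    """Return human-readable findings for metrics that cross a threshold."""
    findings = []
    if samples.get("CPUUtilization", 0.0) > cpu_limit:
        findings.append("CPU is saturated: consider a larger instance class")
    if samples.get("FreeableMemory", float("inf")) < min_free_memory_bytes:
        findings.append("Memory is low: reduce parallelism or resize")
    if samples.get("CDCLatencySource", 0.0) > max_latency_seconds:
        findings.append("Source capture is lagging: check log access and network")
    if samples.get("CDCLatencyTarget", 0.0) > max_latency_seconds:
        findings.append("Target apply is lagging: check target load and batch apply")
    return findings


latest = {
    "CPUUtilization": 91.5,           # percent
    "FreeableMemory": 4 * 1024 ** 3,  # bytes
    "CDCLatencySource": 2.0,          # seconds
    "CDCLatencyTarget": 140.0,        # seconds
}
for finding in find_bottlenecks(latest):
    print(finding)
```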

Source and Target Database Support and Heterogeneous Migrations

Supported Database Engines

AWS DMS supports migrations from numerous database engines. Common source databases include Oracle, SQL Server, MySQL, PostgreSQL, MariaDB, MongoDB, and SAP ASE. Supported targets include Amazon RDS, Aurora, DynamoDB, Amazon S3, Amazon OpenSearch Service (formerly Amazon Elasticsearch Service), and more.

This broad support enables both homogeneous and heterogeneous migrations. Homogeneous migrations use the same database engine for source and target. Heterogeneous migrations move data between different database engines.

Schema Conversion for Heterogeneous Migrations

Heterogeneous migrations require additional consideration. Schema structures, data types, and features may not map directly between engines. The AWS Schema Conversion Tool (SCT) works alongside DMS to transform source schemas into target-compatible formats.

SCT handles database-specific objects like stored procedures, triggers, and packages. For example, when migrating from Oracle to PostgreSQL, SCT converts Oracle packages into PostgreSQL functions and rewrites PL/SQL code as PL/pgSQL.

Migration Assessment and Validation

SCT analyzes source databases and identifies incompatibilities with target engines. It provides assessment reports and suggested remediation strategies.

Homogeneous migrations between the same database engines are simpler and typically faster. Schema structures remain compatible, though you still benefit from DMS's managed approach and CDC capabilities.

Complex Migration Handling

Pre-migration validation and testing are crucial for success. Identify data type conversions, application compatibility issues, and potential performance impacts before cutover. DMS supports parallel processing of multiple tables and custom transformation rules to handle complex business logic during migrations.

Migration Task Configuration, Validation, and Best Practices

Configuring Migration Tasks

Configuring a DMS migration task involves specifying source and target endpoints. Select tables or schemas to migrate and define transformation rules. Table mappings allow selective migration using pattern matching with wildcards.

Selection rules use include and exclude actions to control which objects migrate. This enables granular control over your migration scope.
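Selection rules are expressed as JSON in the task's table mappings, where `%` acts as a wildcard in schema and table names. The sketch below builds an include rule and an exclude rule; the helper name and the `HR` and `TMP%` names are made up for illustration.

```python
import json


def selection_rule(rule_id, schema, table, action):
    """Build one DMS selection rule; '%' matches any run of characters."""
    if action not in ("include", "exclude"):
        raise ValueError("action must be 'include' or 'exclude'")
    return {
        "rule-type": "selection",
        "rule-id": str(rule_id),
        "rule-name": str(rule_id),
        "object-locator": {"schema-name": schema, "table-name": table},
        "rule-action": action,
    }


# Migrate every table in HR except those whose names start with TMP.
table_mappings = {
    "rules": [
        selection_rule(1, "HR", "%", "include"),
        selection_rule(2, "HR", "TMP%", "exclude"),
    ]
}
print(json.dumps(table_mappings, indent=2))
```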

Transformation and LOB Handling

Transformation rules can rename tables or columns, add prefix or suffix values, and remove columns during migration. LOB handling settings determine how large objects are processed. Choose limited LOB mode, which is faster but truncates LOBs above a configured maximum size, or full LOB mode, which migrates LOBs of any size but is slower.
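Transformation rules share the table-mapping JSON format with selection rules and differ mainly in `rule-type`, `rule-target`, and `rule-action`. The following sketch builds one rule for each of three actions (rename a table, prefix table names, drop a column); the helper names and all schema, table, and column names are hypothetical.

```python
def _transformation(rule_id, action, target, locator, value=None):
    """Assemble a DMS transformation rule from its common parts."""
    rule = {
        "rule-type": "transformation",
        "rule-id": str(rule_id),
        "rule-name": str(rule_id),
        "rule-action": action,
        "rule-target": target,
        "object-locator": locator,
    }
    if value is not None:
        rule["value"] = value
    return rule


def rename_table(rule_id, schema, table, new_name):
    locator = {"schema-name": schema, "table-name": table}
    return _transformation(rule_id, "rename", "table", locator, new_name)


def add_table_prefix(rule_id, schema, prefix):
    # '%' applies the prefix to every table in the schema.
    locator = {"schema-name": schema, "table-name": "%"}
    return _transformation(rule_id, "add-prefix", "table", locator, prefix)


def remove_column(rule_id, schema, table, column):
    locator = {"schema-name": schema, "table-name": table, "column-name": column}
    return _transformation(rule_id, "remove-column", "column", locator)


rules = [
    rename_table(10, "HR", "EMP", "employees"),
    add_table_prefix(11, "HR", "legacy_"),
    remove_column(12, "HR", "EMP", "SSN"),
]
```

These rule dictionaries would be appended to the same `rules` list that holds the selection rules for the task.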

Task settings control parallel processing parameters, batch size, error handling, and data validation. When validation is enabled, DMS verifies data integrity after rows are migrated.
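LOB handling and validation are both switched on through the task settings JSON. The sketch below assumes the `TargetMetadata` and `ValidationSettings` sections of that document; the helper name and the size values are illustrative only.

```python
import json


def lob_and_validation_settings(lob_mode, lob_max_size_kb=32, validate=True):
    """Return a task-settings JSON fragment for LOB mode and data validation."""
    if lob_mode not in ("limited", "full"):
        raise ValueError("lob_mode must be 'limited' or 'full'")
    target_metadata = {
        "SupportLobs": True,
        "FullLobMode": lob_mode == "full",
        "LimitedSizeLobMode": lob_mode == "limited",
    }
    if lob_mode == "limited":
        # LOBs larger than this many kilobytes are truncated.
        target_metadata["LobMaxSize"] = lob_max_size_kb
    else:
        # Full mode moves each LOB in chunks of this many kilobytes.
        target_metadata["LobChunkSize"] = 64
    settings = {
        "TargetMetadata": target_metadata,
        "ValidationSettings": {"EnableValidation": validate},
    }
    return json.dumps(settings, indent=2)


print(lob_and_validation_settings("limited", lob_max_size_kb=128))
```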

Full Load and CDC Configuration

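The task's migration type determines whether DMS migrates existing data only (full load), replicates ongoing changes only (CDC), or does both in sequence. Full load settings control how existing data is loaded, while CDC settings control how ongoing changes are captured and applied to the target database.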
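The choice between full load, CDC, or both is made when the task is created. The sketch below builds a dictionary shaped like the keyword arguments of boto3's DMS `create_replication_task` call; the helper name is hypothetical, the ARNs are placeholders, and no request is made.

```python
import json

MIGRATION_TYPES = ("full-load", "cdc", "full-load-and-cdc")


def replication_task_params(identifier, source_arn, target_arn, instance_arn,
                            migration_type, table_mappings):
    """Return keyword arguments shaped for a DMS create_replication_task request."""
    if migration_type not in MIGRATION_TYPES:
        raise ValueError(f"migration_type must be one of {MIGRATION_TYPES}")
    return {
        "ReplicationTaskIdentifier": identifier,
        "SourceEndpointArn": source_arn,
        "TargetEndpointArn": target_arn,
        "ReplicationInstanceArn": instance_arn,
        "MigrationType": migration_type,
        # Table mappings are passed as a JSON string, not a dict.
        "TableMappings": json.dumps(table_mappings),
    }


mappings = {"rules": [{
    "rule-type": "selection", "rule-id": "1", "rule-name": "1",
    "object-locator": {"schema-name": "HR", "table-name": "%"},
    "rule-action": "include",
}]}
task = replication_task_params(
    "hr-migration",
    "arn:aws:dms:us-east-1:123456789012:endpoint:SOURCE",   # placeholder
    "arn:aws:dms:us-east-1:123456789012:endpoint:TARGET",   # placeholder
    "arn:aws:dms:us-east-1:123456789012:rep:INSTANCE",      # placeholder
    "full-load-and-cdc",
    mappings,
)
```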

Validation compares the rows in the source with the corresponding rows in the target and reports any mismatches. It confirms migration accuracy, though it adds processing overhead on the source, the target, and the replication instance.
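The idea behind validation can be illustrated with a small in-memory comparison. This is a conceptual sketch only, not the algorithm DMS uses internally: it assumes each table is available as a dictionary keyed by primary key and compares per-row digests.

```python
import hashlib


def row_digest(row):
    """Hash a row's columns in a stable order so equal rows hash equally."""
    canonical = "|".join(f"{name}={row[name]!r}" for name in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_tables(source_rows, target_rows):
    """Report keys missing from the target, extra in it, or with differing data."""
    source_keys, target_keys = set(source_rows), set(target_rows)
    mismatched = sorted(
        key for key in source_keys & target_keys
        if row_digest(source_rows[key]) != row_digest(target_rows[key])
    )
    return {
        "missing": sorted(source_keys - target_keys),
        "extra": sorted(target_keys - source_keys),
        "mismatched": mismatched,
    }


source_table = {
    1: {"name": "Ada", "dept": "ENG"},
    2: {"name": "Grace", "dept": "OPS"},
    3: {"name": "Linus", "dept": "ENG"},
}
target_table = {
    1: {"name": "Ada", "dept": "ENG"},
    2: {"name": "Grace", "dept": "ENG"},   # differs from source
}
report = compare_tables(source_table, target_table)
print(report)
```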

Testing and Validation Strategy

Pre-migration testing using sample data reduces risk before full-scale migrations. Post-migration validation includes application testing, performance benchmarking, and verifying business logic.

Common best practices include documenting all parameters, creating rollback plans, scheduling migrations during maintenance windows, and monitoring performance throughout the process.

Change Data Capture and Zero-Downtime Migration Strategy

How CDC Enables Zero-Downtime Migrations

Change Data Capture (CDC) enables zero-downtime migrations in which applications keep running against the source database while DMS replicates changes to the target in near real time.

CDC works by reading the source database's transaction logs or journals. It identifies INSERT, UPDATE, and DELETE operations committed from the start of the full load onward. Changes that arrive while a table is still loading are cached and applied once that table's load completes. The replication instance then applies ongoing changes to the target database.

This approach allows applications to continue operating normally while the target database catches up with the source, which minimizes business disruption.
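The apply side of CDC can be pictured as replaying an ordered stream of change events against the target. The sketch below is a toy model with a dictionary standing in for the target table; the event shape (`op`, `key`, `row`) is invented for illustration and is not a DMS format.

```python
def apply_changes(target, changes):
    """Replay change events, in commit order, against an in-memory target table."""
    for change in changes:
        op, key = change["op"], change["key"]
        if op == "INSERT":
            target[key] = dict(change["row"])
        elif op == "UPDATE":
            # Merge changed columns into the existing row.
            target[key] = {**target.get(key, {}), **change["row"]}
        elif op == "DELETE":
            target.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op}")
    return target


# State of the target after full load, followed by changes captured since then.
target_table = {1: {"name": "Ada", "dept": "ENG"}}
captured = [
    {"op": "INSERT", "key": 2, "row": {"name": "Grace", "dept": "OPS"}},
    {"op": "UPDATE", "key": 1, "row": {"dept": "RESEARCH"}},
    {"op": "DELETE", "key": 2},
]
apply_changes(target_table, captured)
print(target_table)
```

Order matters here: replaying the same events in a different sequence can leave the target in a different state, which is why changes are applied in commit order.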

Three-Phase Migration Process

The migration involves three distinct phases. First, full load transfers all existing data to the target database using parallel processing. Second, CDC captures and applies ongoing changes to keep the target synchronized.

Third, cutover switches applications to the target database. This occurs after validation confirms that all changes were applied correctly.
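The cutover decision can be framed as a checklist over the state of the first two phases. The sketch below is one possible gate; the status field names and the latency threshold are hypothetical and would need to be populated from your own monitoring.

```python
def ready_for_cutover(status, max_latency_seconds=5.0):
    """Return (ready, blockers) based on load progress, lag, and validation."""
    blockers = []
    if status["tables_loading"] > 0:
        blockers.append("full load still in progress")
    lag = max(status["cdc_latency_source"], status["cdc_latency_target"])
    if lag > max_latency_seconds:
        blockers.append(f"replication lag is {lag:.0f}s")
    if status["validation_failures"] > 0:
        blockers.append("validation reported mismatched rows")
    return (not blockers, blockers)


current = {
    "tables_loading": 0,
    "cdc_latency_source": 1.0,    # seconds
    "cdc_latency_target": 42.0,   # seconds
    "validation_failures": 0,
}
ready, blockers = ready_for_cutover(current)
print(ready, blockers)
```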

Performance Optimization for CDC

CDC performance depends on both the rate of changes on the source and the target database's ability to apply them, so monitor replication latency and tune accordingly.

Before cutover, validate that all captured changes have been applied to the target. Rehearse the cutover process in a non-production environment beforehand to identify application compatibility issues and verify that all connections switch successfully.

Database-Specific CDC Requirements

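CDC prerequisites vary significantly by source engine. MySQL requires binary logging to be enabled with row-based format (binlog_format=ROW). Oracle requires ARCHIVELOG mode and supplemental logging, and DMS reads the redo logs using either LogMiner or Binary Reader. SQL Server requires the full or bulk-logged recovery model, with MS-Replication or MS-CDC enabled so that DMS can read changes from the transaction log.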

Understanding these requirements prevents migration delays. It ensures successful implementation of zero-downtime strategies for your specific database platform.