Understanding Linear Regression Fundamentals

Linear regression is the foundation of regression analysis. It models the relationship between independent variables (features) and a dependent variable (target) using a linear equation.

Basic Linear Regression Formula

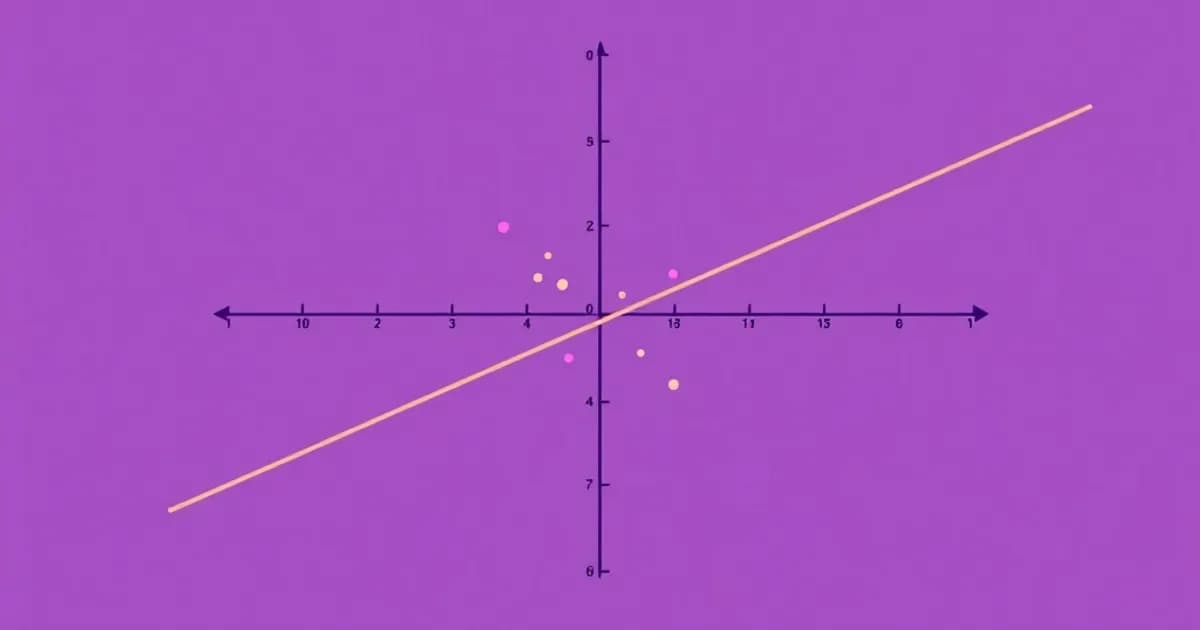

The basic form is y = mx + b, where y is the predicted value, m is the slope, x is the input feature, and b is the y-intercept. Simple linear regression involves one independent variable. Multiple linear regression uses several features, extending the equation to y = b0 + b1x1 + b2x2 + ... + bnxn, with one coefficient per feature.

The goal is to find the best-fit line that minimizes the distance between predicted and actual values. This distance is typically measured by the sum of squared residuals. Ordinary least squares (OLS) estimation calculates optimal coefficients to achieve this.
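For a single feature, the OLS coefficients have a closed form: the slope is the covariance of x and y divided by the variance of x, and the intercept follows from the means. The following is a minimal sketch of that calculation; the function name `fit_simple_ols` and the sample data are illustrative assumptions, not part of any library.

```python
def fit_simple_ols(xs, ys):
    """Return (slope, intercept) minimizing the sum of squared residuals."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel.
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    intercept = y_mean - slope * x_mean
    return slope, intercept


# Illustrative data lying exactly on y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
m, b = fit_simple_ols(xs, ys)
print(m, b)  # 2.0 1.0
```

With several features the same idea applies, but the coefficients are usually obtained with a linear algebra routine rather than by hand.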

Critical Assumptions for Valid Models

Linear regression relies on four key assumptions:

- Linearity: The relationship between variables is actually linear

- Independence: Observations are independent of each other

- Homoscedasticity: Errors have constant variance across the full range of predicted values

- Normality: Errors follow a normal distribution

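Violations of these assumptions can lead to unreliable predictions and invalid statistical tests. Non-normal errors, for instance, mainly affect confidence intervals and p-values rather than the coefficient estimates themselves. Residuals, the differences between observed and predicted values, are the main tool for checking these assumptions.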

Interpreting Residuals

A well-fit model shows randomly scattered residuals with no clear pattern. Systematic patterns, such as curvature or a funnel shape, suggest that an assumption is violated and the model needs improvement. Always inspect residual plots to diagnose model problems before drawing conclusions.
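As a rough illustration of a systematic pattern, the sketch below fits a straight line to data that is actually quadratic and prints the residuals. The helper `fit_line` and the data are assumptions made for this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return slope, y_mean - slope * x_mean


# Illustrative data: the true relationship is y = x^2, not a line.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [x ** 2 for x in xs]

slope, intercept = fit_line(xs, ys)
# Residual = observed value minus predicted value.
residuals = [y - (slope * x + intercept) for x, y in zip(xs, ys)]
print(residuals)  # [5.0, 0.0, -3.0, -4.0, -3.0, 0.0, 5.0]
```

The residuals are positive at both ends and negative in the middle. That U shape is the kind of pattern that signals a missing non-linear term, even though the residuals still sum to zero.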

Evaluating Regression Models with Key Metrics

Selecting the right evaluation metric is critical for assessing regression model performance. Different metrics reveal different aspects of how well your model generalizes.

Understanding R-Squared and Adjusted R-Squared

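R-squared (R²) measures the proportion of variance in the dependent variable explained by the model. For an OLS model with an intercept, evaluated on its training data, it ranges from 0 to 1, where 1 indicates perfect prediction; on held-out data it can be negative when the model predicts worse than simply using the mean. On training data, R² also never decreases when you add features, even if those features don't genuinely improve predictions.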

Adjusted R² penalizes adding unnecessary variables. It's more reliable for comparing models because it accounts for the number of features used.
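Both quantities are short formulas. The sketch below computes R² as 1 minus the ratio of residual to total sum of squares, and adjusted R² using the usual correction for sample size n and feature count p. The function names and sample values are illustrative assumptions.

```python
def r_squared(y_true, y_pred):
    """R^2 = 1 - SS_res / SS_tot."""
    y_mean = sum(y_true) / len(y_true)
    ss_res = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    ss_tot = sum((y - y_mean) ** 2 for y in y_true)
    return 1.0 - ss_res / ss_tot


def adjusted_r_squared(r2, n_samples, n_features):
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_features - 1)


# Illustrative observed and predicted values.
y_true = [3.0, 5.0, 7.0, 9.0, 11.0]
y_pred = [2.8, 5.3, 6.9, 9.2, 10.8]

r2 = r_squared(y_true, y_pred)
adj_one_feature = adjusted_r_squared(r2, n_samples=5, n_features=1)
adj_two_features = adjusted_r_squared(r2, n_samples=5, n_features=2)
print(r2, adj_one_feature, adj_two_features)
```

For the same R², the adjusted value drops as the feature count rises, which is the penalty described above.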

Error Metrics for Practical Interpretation

Mean Squared Error (MSE) calculates the average of squared differences between predicted and actual values. It emphasizes larger errors more heavily. Root Mean Squared Error (RMSE) is the square root of MSE. It's expressed in the same units as the target variable, making it more interpretable.

Mean Absolute Error (MAE) takes the average absolute differences without squaring. It provides a straightforward measure of prediction accuracy without amplifying large errors.
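To make the difference concrete, here is a small sketch that computes all three metrics on the same predictions. The sample values are made up so that one prediction is far off.

```python
import math


def mse(y_true, y_pred):
    """Mean of squared differences."""
    return sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Square root of MSE, in the same units as the target."""
    return math.sqrt(mse(y_true, y_pred))


def mae(y_true, y_pred):
    """Mean of absolute differences."""
    return sum(abs(y - p) for y, p in zip(y_true, y_pred)) / len(y_true)


# Illustrative values: three small errors and one large error of 4.
y_true = [10.0, 12.0, 14.0, 16.0]
y_pred = [11.0, 12.0, 13.0, 20.0]

print(mse(y_true, y_pred))   # 4.5
print(rmse(y_true, y_pred))  # about 2.12
print(mae(y_true, y_pred))   # 1.5
```

The single large error pulls RMSE well above MAE, which is why a gap between the two often hints at a few badly mispredicted points.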

Detecting Overfitting

Compare training and test set metrics to detect overfitting. A large gap suggests the model memorized training data rather than learning generalizable patterns. Techniques such as k-fold cross-validation provide more robust performance estimates. They train and test on multiple data subsets, reducing the impact of any single random split.
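The k-fold idea can be sketched in a few lines: shuffle the row indices, cut them into k folds, and let each fold serve once as the test set. The version below is a simplified illustration using a one-feature least-squares fit; `fit_line`, `k_fold_mse`, and the data are assumptions for this example.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return slope, y_mean - slope * x_mean


def k_fold_mse(xs, ys, k=5, seed=0):
    """Return the held-out MSE of each of the k folds."""
    indices = list(range(len(xs)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k disjoint index groups
    scores = []
    for fold in folds:
        held_out = set(fold)
        train = [i for i in indices if i not in held_out]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        squared_errors = [(ys[i] - (slope * xs[i] + intercept)) ** 2 for i in fold]
        scores.append(sum(squared_errors) / len(squared_errors))
    return scores


# Illustrative data: y = 3x + 2 with a small alternating disturbance.
xs = [float(i) for i in range(20)]
ys = [3.0 * x + 2.0 + (0.5 if i % 2 == 0 else -0.5) for i, x in enumerate(xs)]

scores = k_fold_mse(xs, ys, k=5)
print(scores, sum(scores) / len(scores))
```

Reporting the mean of the fold scores, and ideally their spread, gives a steadier estimate than a single train/test split.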

Advanced Regression Techniques and Regularization

When a linear model overfits, for example because it has many features relative to the number of observations, regularization techniques add a penalty on coefficient size. This improves generalization and discourages the model from fitting noise.

Ridge and Lasso Regression

Ridge regression adds an L2 penalty to the cost function. It shrinks coefficients toward zero without eliminating them entirely. Ridge is particularly useful when dealing with multicollinearity (correlated features).

Lasso regression uses an L1 penalty. It can force some coefficients to exactly zero. This effectively performs feature selection by removing less important variables. Lasso produces more interpretable models because irrelevant features disappear.

Elastic Net combines both L1 and L2 penalties. It offers flexibility between ridge and lasso approaches. Use it when you're uncertain which is better or want both feature selection and coefficient shrinkage.
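With one centered feature and no intercept, both penalties have closed-form solutions, which makes the contrast easy to see. The sketch below assumes the objectives 0.5·SSE + 0.5·λ·β² for ridge and 0.5·SSE + λ·|β| for lasso; libraries scale these penalties differently, so the numbers are illustrative only, and the function names are hypothetical.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold, clipping at exactly zero."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def one_feature_coefficients(xs, ys, penalty):
    """Return (ols, ridge, lasso) slopes for centered data, no intercept."""
    s_xy = sum(x * y for x, y in zip(xs, ys))
    s_xx = sum(x * x for x in xs)
    ols = s_xy / s_xx
    ridge = s_xy / (s_xx + penalty)                 # L2: shrinks, never exactly zero
    lasso = soft_threshold(s_xy, penalty) / s_xx    # L1: can become exactly zero
    return ols, ridge, lasso


# Illustrative centered data on y = 0.5x.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0]
ys = [-1.0, -0.5, 0.0, 0.5, 1.0]

print(one_feature_coefficients(xs, ys, penalty=2.0))
print(one_feature_coefficients(xs, ys, penalty=6.0))
```

At the larger penalty the ridge slope is smaller but still non-zero, while the lasso slope is exactly zero. That is the mechanism behind lasso's feature selection.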

Non-Linear Approaches

Polynomial regression extends linear regression by including polynomial features like x² and x³. It captures non-linear relationships while the model stays linear in its coefficients, so the usual least-squares machinery still applies. However, higher-degree polynomials risk overfitting.
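The key point is that the model stays linear in its coefficients once the feature is transformed. As a small sketch, the example below fits the same least-squares routine twice: once on x and once on the derived feature z = x². The helper names and data are assumptions for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return slope, y_mean - slope * x_mean


def sum_squared_error(features, ys, slope, intercept):
    return sum((y - (slope * f + intercept)) ** 2 for f, y in zip(features, ys))


# Illustrative data generated from y = 2x^2 + 1.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [2.0 * x ** 2 + 1.0 for x in xs]

# Fit on the raw feature x.
raw_fit = fit_line(xs, ys)
raw_sse = sum_squared_error(xs, ys, *raw_fit)

# Fit on the polynomial feature z = x^2 using the same linear routine.
zs = [x ** 2 for x in xs]
poly_fit = fit_line(zs, ys)
poly_sse = sum_squared_error(zs, ys, *poly_fit)

print(raw_fit, raw_sse)
print(poly_fit, poly_sse)
```

The fit on x² recovers the slope 2 and intercept 1 with essentially zero error, while the fit on x cannot capture the curve at all.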

Support Vector Regression (SVR) uses kernel methods to handle non-linear relationships in high-dimensional spaces. Logistic regression, despite its name, is a classification technique that models probability of binary outcomes using a sigmoid function.

Hyperparameter Tuning

Regularization strength is controlled by a hyperparameter, usually written λ in the literature and named alpha in some libraries such as scikit-learn. Tune it using cross-validation to find a good balance between bias and variance. Choosing between techniques depends on your data characteristics, the relationship between variables, and your priorities regarding interpretability versus accuracy.
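A tuning loop can be sketched as follows: fit the model for each candidate penalty, score it on data it has not seen, and keep the best. To stay short, this example uses a single validation split in place of full k-fold cross-validation and a one-feature ridge fit on centered data; the function names, grid, and data are illustrative assumptions.

```python
def ridge_slope(xs, ys, penalty):
    """Ridge slope for centered data with no intercept."""
    s_xy = sum(x * y for x, y in zip(xs, ys))
    s_xx = sum(x * x for x in xs)
    return s_xy / (s_xx + penalty)


def validation_mse(slope, xs, ys):
    return sum((y - slope * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


def select_penalty(train, valid, grid):
    """Return (best_penalty, scores) where scores maps penalty -> validation MSE."""
    scores = {}
    for penalty in grid:
        slope = ridge_slope(train[0], train[1], penalty)
        scores[penalty] = validation_mse(slope, valid[0], valid[1])
    best = min(scores, key=scores.get)
    return best, scores


# Illustrative data: noisy training points, clean validation points on y = x.
train = ([-1.0, 0.0, 1.0], [-1.5, 0.1, 1.4])
valid = ([-2.0, 2.0], [-2.0, 2.0])

best, scores = select_penalty(train, valid, grid=[0.0, 0.1, 1.0, 10.0])
print(best, scores)
```

In this toy case a moderate penalty wins: no penalty overfits the noisy training slope, and a very large penalty shrinks the slope too far.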

Feature Engineering and Preprocessing for Regression

The quality of features directly impacts regression model performance. Feature engineering is a critical skill that separates good models from great ones.

Scaling and Encoding

Feature scaling is often essential for regularized models like ridge and lasso, because their penalties act on coefficient size and a coefficient's size depends on the units of its feature. Standardization (z-score normalization) transforms each feature to mean zero and unit variance. Min-max normalization rescales each feature to a 0-1 range.
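Both transformations are one-line formulas per value. The sketch below uses Python's `statistics` module with the population standard deviation; the function names and sample values are illustrative.

```python
import statistics


def standardize(values):
    """Z-score: subtract the mean, divide by the (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]


def min_max_scale(values):
    """Rescale so the minimum maps to 0 and the maximum maps to 1."""
    low, high = min(values), max(values)
    return [(v - low) / (high - low) for v in values]


values = [10.0, 20.0, 30.0, 40.0, 50.0]
print(standardize(values))
print(min_max_scale(values))  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

Neither function guards against a constant feature, where the standard deviation or range is zero. A real pipeline would need to handle that case.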

Categorical variables must be encoded into numerical form. Use one-hot encoding (creating binary columns for each category) for nominal data. Use ordinal encoding when categories have natural ordering.
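The two encodings can be sketched with plain lists. The helper names, category values, and the ordering passed to `ordinal_encode` are assumptions for this example.

```python
def one_hot_encode(values):
    """Return (categories, rows) with one binary column per category."""
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


def ordinal_encode(values, order):
    """Map each category to its position in the given order."""
    rank = {category: i for i, category in enumerate(order)}
    return [rank[v] for v in values]


# Nominal data: no natural order, so use one-hot encoding.
colors = ["red", "green", "blue", "green"]
categories, encoded_colors = one_hot_encode(colors)
print(categories)      # ['blue', 'green', 'red']
print(encoded_colors)  # [[0, 0, 1], [0, 1, 0], [1, 0, 0], [0, 1, 0]]

# Ordinal data: the order small < medium < large carries meaning.
sizes = ["small", "large", "medium"]
print(ordinal_encode(sizes, order=["small", "medium", "large"]))  # [0, 2, 1]
```

Ordinal encoding on nominal data would impose an order that does not exist, which a linear model would then treat as real.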

Handling Missing Data and Outliers

Handling missing data is crucial. Options include deletion (removing rows with missing values), mean/median imputation, or advanced techniques like K-nearest neighbors imputation. Choose based on how much data is missing and whether missingness relates to other variables.
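As a minimal sketch of mean and median imputation, the example below represents missing entries as `None` and fills them with a statistic computed from the observed values. The function name and data are illustrative.

```python
import statistics


def impute(values, strategy="median"):
    """Replace None entries with the mean or median of the observed values."""
    observed = [v for v in values if v is not None]
    if strategy == "median":
        fill = statistics.median(observed)
    else:
        fill = statistics.fmean(observed)
    return [fill if v is None else v for v in values]


# Illustrative column with two missing entries and one extreme value.
values = [1.0, None, 3.0, 100.0, None]
print(impute(values, strategy="median"))  # [1.0, 3.0, 3.0, 100.0, 3.0]
print(impute(values, strategy="mean"))
```

The extreme value drags the mean far from most of the data, so the median is often the safer fill for skewed columns.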

Outliers can significantly influence regression models, especially with OLS estimation. Detect them through visualization, statistical tests, or domain knowledge. Decide whether to remove, transform, or cap outliers based on whether they represent genuine values or errors.
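One common heuristic, used here as an assumption rather than the only valid rule, flags points more than 1.5 interquartile ranges beyond the quartiles. The sketch below detects such points and caps them at the fences; quartile definitions vary between tools, so exact fences may differ elsewhere.

```python
import statistics


def iqr_fences(values, k=1.5):
    """Return (low, high) fences at k IQRs beyond the first and third quartiles."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def cap_outliers(values, k=1.5):
    """Clip each value into the [low, high] fence range."""
    low, high = iqr_fences(values, k)
    return [min(max(v, low), high) for v in values]


# Illustrative data with one suspicious value.
values = [10, 12, 11, 13, 12, 11, 95]
low, high = iqr_fences(values)
outliers = [v for v in values if v < low or v > high]
capped = cap_outliers(values)
print(outliers)
print(capped)
```

Whether to cap, remove, or keep the flagged value still depends on whether it is a data error or a genuine observation.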

Feature Selection and Interaction Features

Feature selection reduces dimensionality and improves interpretability by removing irrelevant or redundant variables. Methods include correlation analysis, recursive feature elimination, and regularization-based approaches.

Polynomial and interaction features can capture non-linear relationships and variable interactions. However, they increase dimensionality. Fit scaling, encoding, and selection steps on the training set only, then apply the same fitted transformations to the test set. Many practitioners build preprocessing pipelines to ensure reproducibility and prevent data leakage, where information from the test set influences training.
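To show what leakage-free preprocessing looks like, the sketch below learns scaling parameters from the training values alone and reuses them unchanged on the test values. The `fit_scaler` and `transform` helpers are hypothetical names for this example.

```python
import statistics


def fit_scaler(train_values):
    """Learn the mean and standard deviation from training data only."""
    return statistics.fmean(train_values), statistics.pstdev(train_values)


def transform(values, params):
    """Apply previously learned parameters; nothing is re-estimated here."""
    mean, std = params
    return [(v - mean) / std for v in values]


train = [1.0, 2.0, 3.0, 4.0, 5.0]
test = [6.0, 7.0]

params = fit_scaler(train)           # the test set is never seen at this step
train_scaled = transform(train, params)
test_scaled = transform(test, params)
print(params)
print(test_scaled)
```

The scaled test values fall outside the training range, which is expected. Refitting the scaler on the test set would hide that shift and leak test information into the model's inputs.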

Practical Applications and Model Development Workflow

Regression analysis has broad real-world applications across industries. Understanding typical workflows helps you apply regression effectively in practice.

Common Industry Applications

In finance, regression predicts stock prices, credit defaults, and portfolio returns. Real estate uses regression to estimate property values based on location, size, and amenities. Healthcare applications include predicting patient outcomes, treatment effectiveness, and disease progression. Marketing teams use regression for demand forecasting, customer lifetime value prediction, and pricing optimization.

Step-by-Step Modeling Workflow

The typical regression modeling workflow follows these stages:

- Define the problem and collect relevant data

- Perform exploratory data analysis to understand distributions and correlations

- Split data into training and test sets (typically an 80-20 or 70-30 ratio)

- Handle missing values and outliers, and scale features, fitting each preprocessing step on the training set only

- Try multiple regression approaches and compare performance metrics

- Tune hyperparameters like regularization strength using grid or random search

- Perform final evaluation on the held-out test set

- Create interpretable reports of findings and recommendations
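The core of the stages above (split, fit, evaluate on held-out data) can be sketched in miniature. This is a simplified illustration with one feature and synthetic data; the helper names and the 80-20 split are assumptions, and a real project would add the preprocessing, model comparison, and tuning stages.

```python
import math
import random


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle rows and return (train_rows, test_rows)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_line(xs, ys):
    """Ordinary least squares for one feature; returns (slope, intercept)."""
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    slope = s_xy / s_xx
    return slope, y_mean - slope * x_mean


def rmse(y_true, y_pred):
    return math.sqrt(sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / len(y_true))


# Synthetic data: y = 4x + 3 with a small alternating disturbance.
rows = [(float(i), 4.0 * i + 3.0 + (0.2 if i % 2 == 0 else -0.2)) for i in range(20)]

train_rows, test_rows = train_test_split(rows)
slope, intercept = fit_line([x for x, _ in train_rows], [y for _, y in train_rows])
test_rmse = rmse([y for _, y in test_rows], [slope * x + intercept for x, _ in test_rows])
print(len(train_rows), len(test_rows), test_rmse)
```

The test rows are touched only once, at the final evaluation, so the reported error is an honest estimate of performance on unseen data.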

Validation and Interpretation

Understanding assumptions like linearity and homoscedasticity helps diagnose when regression isn't appropriate. Residual analysis reveals whether assumptions are violated and guides model improvements. Always document your workflow, including data sources, preprocessing steps, and decision rationale. This ensures reproducibility and facilitates communication with stakeholders.