Core Concepts of Continuous Deployment

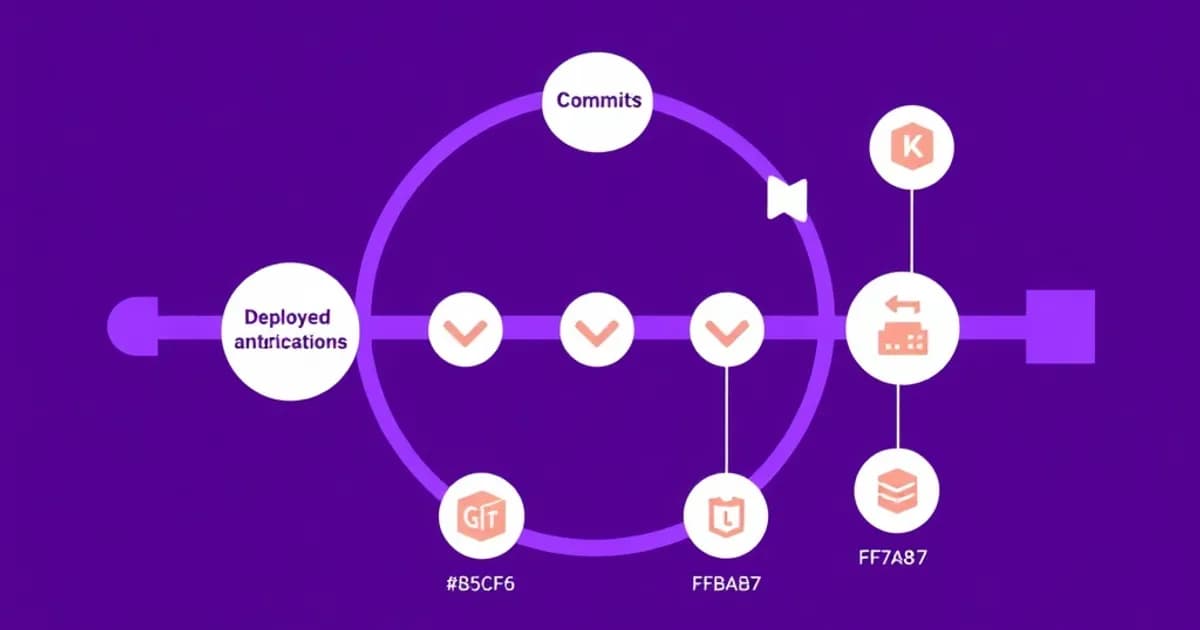

Continuous Deployment is the final CI/CD pipeline stage where code changes automatically move from testing to production without human intervention. The process starts when developers commit code to version control, triggering an automated pipeline that runs tests and security scans.

How the Deployment Pipeline Works

Once all automated tests pass, code deploys to production automatically. This differs from continuous delivery, where deployments need human approval. Key components include automated testing frameworks, deployment automation tools, and monitoring systems that track application health post-deployment.
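The difference between the two models comes down to a single gate. The sketch below is illustrative only: `PipelineResult`, `should_deploy`, and the `mode` values are hypothetical names, not part of any CI/CD product.

```python
from dataclasses import dataclass


@dataclass
class PipelineResult:
    """Outcome of the automated checks for one commit (hypothetical structure)."""
    tests_passed: bool
    security_scan_passed: bool


def should_deploy(result: PipelineResult, mode: str, approved: bool = False) -> bool:
    """Decide whether a build may go to production.

    mode is an assumed setting: "deployment" (fully automatic)
    or "delivery" (a person must approve the release).
    """
    checks_ok = result.tests_passed and result.security_scan_passed
    if not checks_ok:
        return False  # a failed check blocks the release in both models
    if mode == "deployment":
        return True  # continuous deployment: no human in the loop
    if mode == "delivery":
        return approved  # continuous delivery: wait for sign-off
    raise ValueError(f"unknown mode: {mode!r}")
```

With passing checks, `should_deploy(result, "deployment")` returns `True` immediately, while `should_deploy(result, "delivery")` stays `False` until `approved=True` is supplied.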

Safe Deployment Techniques

The pipeline must include safety mechanisms to prevent widespread failures:

- Canary deployments release new versions to a small user subset first, then gradually increase

- Blue-green deployments maintain two identical production environments for zero-downtime updates

- Feature flags allow toggling features on or off without redeployment

Philosophy and Requirements

Organizations implementing CD must establish rigorous testing standards, maintain comprehensive observability, and create effective rollback procedures. The philosophy is simple: if testing and deployment are automated reliably enough, deploying multiple times daily becomes safe and beneficial rather than risky.

Essential DevOps Tools and Technologies

Modern continuous deployment relies on an ecosystem of tools that each handle one part of the path from commit to production. Version control systems like Git form the foundation, triggering pipelines when code is committed.

CI/CD Platforms and Orchestration

Orchestration platforms automate the entire pipeline from commit to production:

- Jenkins

- GitLab CI/CD

- GitHub Actions

- CircleCI

These tools define workflows in configuration files, such as a Jenkinsfile for Jenkins or YAML files for GitLab CI/CD, GitHub Actions, and CircleCI, that specify test execution and deployment steps.

Containerization and Infrastructure

Docker packages an application together with its dependencies so that it runs consistently across environments. Kubernetes orchestrates containerized applications in production, handling deployment, scaling, and self-healing. Infrastructure-as-Code tools like Terraform and Ansible enable automated provisioning of cloud resources and reproducible server configuration.

Supporting Systems

Container registries like Docker Hub and Amazon ECR store built images. Monitoring platforms including Prometheus, ELK Stack, and Datadog provide real-time application performance insights. Artifact repositories like Artifactory manage build artifacts and dependencies throughout the pipeline. Security scanning tools, integrated directly into the pipeline, detect vulnerabilities in code and dependencies.

Understanding how these tools integrate is essential for building robust deployment pipelines that maintain reliability while deploying frequently.

Deployment Strategies and Safety Practices

Implementing continuous deployment safely requires understanding various strategies that minimize risk while enabling rapid releases. Each strategy addresses different organizational needs and risk tolerances.

Key Deployment Strategies

Canary deployments release new versions to a small percentage of users initially. You monitor behavior and performance metrics, then gradually increase the percentage until all users run the new version. Because only a fraction of users see the new version at first, unexpected issues are caught before they affect everyone.
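One way to picture a canary rollout is as two small decisions: which users see the new version, and when to widen the cohort. The sketch below is a simplified illustration. The function names, the step schedule, and the 1% error threshold are all assumptions, and real systems usually make this decision in a load balancer or service mesh.

```python
import hashlib


def in_canary(user_id: str, percent: int) -> bool:
    """Return True if this user falls inside the canary cohort.

    Hashing the user ID keeps the assignment stable across requests,
    so a user does not flip between old and new versions.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # value in 0..99
    return bucket < percent


def next_percent(current: int, error_rate: float, max_error_rate: float = 0.01) -> int:
    """Advance the rollout one step, or drop to 0 if the canary looks unhealthy."""
    steps = [1, 5, 25, 50, 100]  # illustrative schedule, not a standard
    if error_rate > max_error_rate:
        return 0  # pull the canary back entirely
    for step in steps:
        if step > current:
            return step
    return 100  # already fully rolled out
```

Because a user's bucket never changes, anyone included at 5% is still included at 25%, which keeps the experience consistent as the rollout grows.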

Blue-green deployments maintain two identical production environments called blue and green. One serves live traffic while the other stands ready. New versions deploy to the inactive environment, then traffic switches instantly. If problems occur, traffic switches back immediately.
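The switch described above can be modeled as a pointer that moves between two environments. This is a toy in-memory sketch, assuming a hypothetical `BlueGreenRouter` class; in practice the pointer is typically a load balancer target or DNS record.

```python
class BlueGreenRouter:
    """Tracks which of two environments receives live traffic."""

    def __init__(self, initial_version: str = "v1") -> None:
        self.live = "blue"
        self.versions = {"blue": initial_version, "green": initial_version}

    @property
    def idle(self) -> str:
        """Name of the environment that is not serving traffic."""
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version: str) -> str:
        """Install a new version on the idle environment; live traffic is untouched."""
        target = self.idle
        self.versions[target] = version
        return target

    def switch(self) -> str:
        """Point live traffic at the other environment and return its name."""
        self.live = self.idle
        return self.live
```

Calling `switch()` a second time moves traffic back to the previous environment, which still runs the old version. That is what makes the rollback immediate.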

Rolling deployments gradually replace old instances with new ones, maintaining service availability throughout.

Feature flags decouple deployment from feature activation, so a problematic feature can be switched off without redeploying.
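A feature flag is, at its simplest, a runtime lookup that guards a code path. The following sketch assumes a hypothetical in-memory `FeatureFlags` store and an invented `new_discount` flag; production systems usually read flags from a configuration service.

```python
from typing import Dict, List


class FeatureFlags:
    """Minimal in-memory flag store (illustrative only)."""

    def __init__(self, defaults: Dict[str, bool]) -> None:
        self._flags = dict(defaults)

    def is_enabled(self, name: str) -> bool:
        # Unknown flags are treated as off, so new code paths stay dark by default.
        return self._flags.get(name, False)

    def set(self, name: str, enabled: bool) -> None:
        self._flags[name] = enabled


def checkout_total(prices: List[float], flags: FeatureFlags) -> float:
    """Compute a total; the discount logic ships dark until the flag is turned on."""
    total = sum(prices)
    if flags.is_enabled("new_discount"):
        total *= 0.9  # new behavior, already deployed but gated
    return round(total, 2)
```

The same deployed code produces the old or new behavior depending on the flag, so turning the flag off acts as a rollback of the feature alone.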

Critical Safety Mechanisms

Automated rollback is essential. Systems must automatically detect deployment failures through health checks and metrics, then revert to previous stable versions. Database migrations require special handling since they cannot be instantly rolled back. Maintain backward compatibility and use feature flags around schema changes.
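The detect-then-revert loop can be sketched as follows. The function and parameter names are hypothetical, `activate` and `health_check` stand in for whatever the platform provides, and a real implementation would wait between checks and also watch metrics such as error rate.

```python
from typing import Callable


def deploy_with_rollback(
    new_version: str,
    previous_version: str,
    activate: Callable[[str], None],
    health_check: Callable[[], bool],
    checks: int = 3,
) -> str:
    """Activate new_version, then revert if any health check fails.

    Returns the version left running.
    """
    activate(new_version)
    for _ in range(checks):
        if not health_check():
            activate(previous_version)  # automatic rollback, no human decision needed
            return previous_version
    return new_version
```

Note that this only reverts the application version. As the paragraph above points out, a schema change applied alongside it would not be undone by this step, which is why backward-compatible migrations matter.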

Comprehensive monitoring before, during, and after deployments enables rapid issue detection. Post-deployment verification includes running smoke tests, monitoring error rates, and being prepared to roll back within minutes if problems appear.

Building and Maintaining Reliable CI/CD Pipelines

A successful continuous deployment pipeline requires careful design, implementation, and ongoing maintenance. Pipeline configuration should be version-controlled alongside application code, enabling code review of infrastructure changes.

Essential Pipeline Stages

Pipelines should be divided into clear stages that run in a defined order:

- Source code checkout from version control

- Build and basic checks

- Unit testing and integration testing

- Security analysis

- Artifact creation (Docker images, binaries)

- Staging deployment

- Smoke testing

- Production deployment

- Post-deployment verification

Each stage should be independent and idempotent, producing consistent results regardless of execution history.
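A minimal sketch of a staged pipeline with one idempotent stage is shown below. The `run_pipeline` runner, the `Stage` type, and the artifact naming scheme are assumptions made for illustration, not the behavior of any particular CI tool.

```python
from typing import Callable, Dict, List, Tuple

# A stage is a name plus an action that receives shared context and reports success.
Stage = Tuple[str, Callable[[Dict[str, str]], bool]]


def run_pipeline(stages: List[Stage], context: Dict[str, str]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, action in stages:
        if not action(context):
            break  # later stages, including deployment, never run
        completed.append(name)
    return completed


def build(context: Dict[str, str]) -> bool:
    # Idempotent: the artifact name is derived only from the commit,
    # so running this stage twice yields the same result.
    context["artifact"] = f"app-{context['commit']}.tar.gz"
    return True
```

Because `build` depends only on its input commit, re-running it after a partial failure leaves the context unchanged. That property is what makes retries safe.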

Quality and Performance

Test quality matters more than raw coverage numbers. A high coverage percentage alone does not guarantee quality; what counts is thoughtful test design that catches real bugs. Tests must also run quickly, as slow pipelines discourage frequent commits and delay feedback. Use parallel execution to reduce overall time.

Pipeline Maintenance

Pipeline failures should be highly visible and addressed immediately rather than accumulating. Keep environments as similar as possible to prevent environment-specific failures, and keep the differences that remain (such as endpoints and credentials) in configuration rather than code. Use secrets management tools like HashiCorp Vault to keep credentials out of the codebase. Artifact management ensures the exact same tested artifact reaches production without rebuilding.
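One common way to confirm that production receives exactly what was tested is to compare content digests. The sketch below uses SHA-256 from the standard library; `artifact_digest` and `can_promote` are hypothetical helper names, and container registries provide a comparable guarantee through image digests.

```python
import hashlib


def artifact_digest(data: bytes) -> str:
    """Return the SHA-256 hex digest that identifies an artifact's exact bytes."""
    return hashlib.sha256(data).hexdigest()


def can_promote(artifact: bytes, tested_digest: str) -> bool:
    """Allow promotion only if the artifact is byte-identical to what was tested."""
    return artifact_digest(artifact) == tested_digest
```

The digest is recorded when the test stages pass, then checked again before the production stage. A rebuilt artifact, even from the same commit, would normally produce a different digest and be rejected.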

Regular pipeline audits identify outdated dependencies, security vulnerabilities, and inefficient stages. Documentation explaining pipeline logic, deployment procedures, and troubleshooting guides helps teams maintain and improve the pipeline as requirements evolve.

Study Strategies and Flashcard Effectiveness

Mastering continuous deployment requires understanding both conceptual foundations and practical details, making flashcards an excellent study tool. Create flashcards organized by category: tools and commands, deployment strategy differences, pipeline stages, configuration syntax, and troubleshooting scenarios.

Creating Effective CD Flashcards

Effective flashcards include practical examples rather than vague definitions. Instead of asking "What is canary deployment?", ask "What percentage of traffic should canary deployments typically start with, and why?" This forces deeper understanding.

Include scenario-based cards like: "In a blue-green deployment, the green environment is failing health checks before traffic switch. What should happen?" Create cards covering common failure modes and recovery procedures. For tool-specific knowledge, reference actual configuration file syntax.

Study Techniques for Retention

Use spaced repetition: review cards frequently at first, then gradually lengthen the intervals as you master concepts. Group related cards to understand relationships between concepts. Create visual associations by including diagrams on cards.
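The idea of lengthening intervals can be made concrete with a very simple scheduling rule. The doubling rule below is an assumption chosen for clarity, not the algorithm used by any specific flashcard application.

```python
from datetime import date, timedelta


def next_interval(current_days: int, remembered: bool) -> int:
    """Double the interval after a correct answer; reset to one day after a miss."""
    if not remembered:
        return 1
    return max(1, current_days) * 2


def next_review(today: date, current_days: int, remembered: bool) -> date:
    """Return the date on which the card should be shown again."""
    return today + timedelta(days=next_interval(current_days, remembered))
```

Under this rule, a card answered correctly each time is reviewed after 2, 4, 8, and then 16 days, while a missed card returns the next day.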

Use active recall by covering answers before checking, forcing your brain to retrieve information. Test yourself on applying knowledge with scenario cards that require combining multiple concepts. Regular review is critical because DevOps tools evolve. Restudy materials quarterly to stay current with latest versions and features.