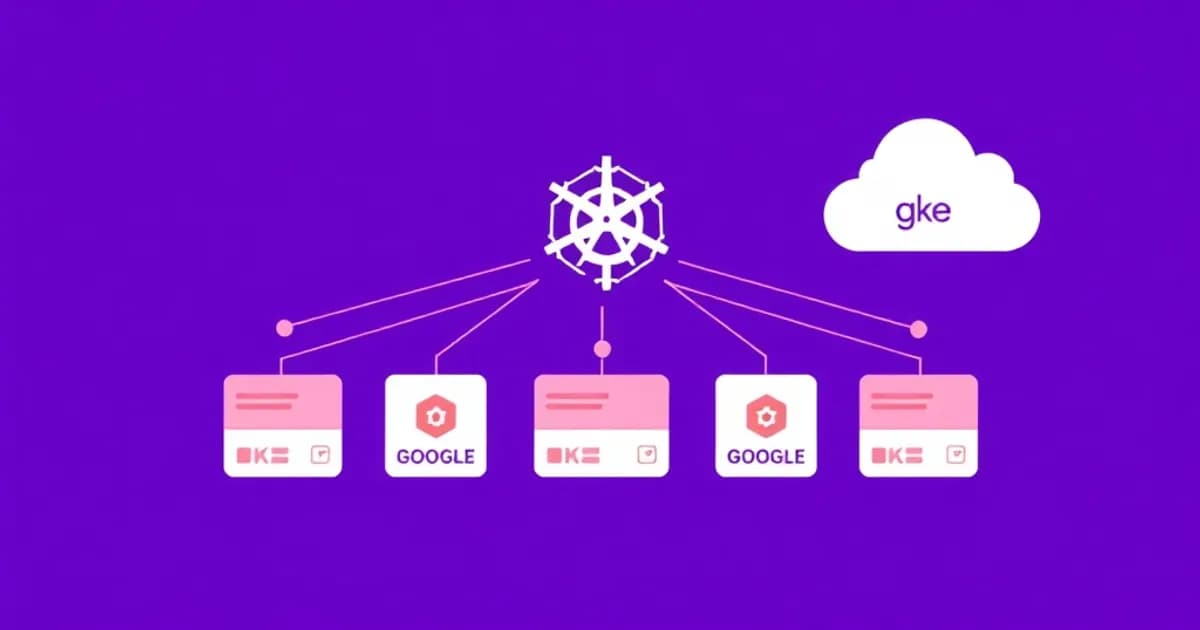

What is Google Kubernetes Engine (GKE)?

Google Kubernetes Engine (GKE) is a fully managed Kubernetes platform on Google Cloud. You get a production-ready environment without managing the control plane yourself.

How GKE Simplifies Kubernetes Operations

Running Kubernetes yourself means maintaining the control plane, applying security patches, backing up etcd, and keeping the API server available. GKE takes on this work: Google manages control plane upgrades and security patches, and regional clusters replicate the control plane across zones. You focus on deploying applications and configuring workloads.

Built on Open-Source Kubernetes

GKE runs Kubernetes, an open-source container orchestration platform originally developed by Google. It automates deployment, scaling, and operation of application containers across machine clusters.

Seamless Integration with Google Cloud Services

GKE integrates with Cloud Storage, Cloud SQL, Pub/Sub, and Cloud Monitoring. This makes it ideal if you're already using Google Cloud services. You get native connectivity without complex configuration.

Flexibility: Standard vs. Autopilot

GKE offers two modes. Standard clusters give you full control over nodes and configurations. Autopilot clusters let Google manage all infrastructure automatically based on your workload needs. Choose Standard for fine-grained control or Autopilot for minimal operational overhead.

Scales from Development to Production

GKE clusters scale from a few nodes to thousands. In Standard mode you pay for the nodes you provision; in Autopilot you pay for the resources your pods request. This flexibility makes GKE suitable for development environments, testing, and mission-critical production workloads serving millions of users.

Core Kubernetes Concepts You Must Master

Understanding these foundational concepts is essential for working effectively with GKE. Each concept builds on the others to form a complete mental model.

Pods: The Smallest Deployable Unit

A Pod is the smallest deployable unit in Kubernetes. It typically contains one container, though it can contain multiple tightly-coupled containers sharing networking and storage. Pods are ephemeral, meaning they get created and destroyed dynamically. You rarely create Pods directly; instead, you use higher-level abstractions.

Deployments: Managing Multiple Pod Replicas

A Deployment manages a set of identical Pod replicas, ensuring a specified number are always running. Deployments handle rolling updates automatically, updating pods gradually without downtime. They're ideal for stateless applications like web servers and APIs.
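As a minimal sketch of the idea, the manifest below asks for three replicas of a stateless web server. The name web, the app label, and the nginx image are placeholders chosen for illustration, not values from any particular cluster.

```yaml
# Hypothetical example: names, labels, and image are placeholders.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 3              # desired number of identical Pods
  selector:
    matchLabels:
      app: web             # must match the Pod template labels below
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
        - name: web
          image: nginx:1.27    # any stateless container image works here
          ports:
            - containerPort: 80
```

If a pod from this Deployment is deleted or its node fails, the Deployment controller creates a replacement to get back to three replicas.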

Services: Stable Network Access

Services provide stable network endpoints for accessing pods. They abstract the underlying pod IPs, which change whenever pods are recreated. The main ways to expose workloads are:

- ClusterIP (default) - accessible only within the cluster

- NodePort - exposes the service on a static port on every node

- LoadBalancer - provisions an external load balancer

- Ingress - not a Service type but a separate resource that routes external HTTP/HTTPS traffic to Services
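For illustration, here is a sketch of a Service that fronts pods labeled app: web (a hypothetical label) and exposes them through an external load balancer. Removing the type line would make it a ClusterIP Service instead.

```yaml
# Hypothetical example: the Service name and the app label are placeholders.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  type: LoadBalancer     # omit this line to get the default ClusterIP type
  selector:
    app: web             # traffic goes to ready pods carrying this label
  ports:
    - port: 80           # port the Service listens on
      targetPort: 80     # container port that receives the traffic
```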

Namespaces: Logical Isolation

Namespaces provide logical isolation within a cluster. Multiple teams or applications can share resources while maintaining separation. This prevents accidental interference between projects.
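A sketch of how this can look in practice: one namespace per team, with an optional ResourceQuota capping what that team can request. The name team-a and the quota numbers are illustrative assumptions.

```yaml
# Hypothetical example: namespace name and quota values are placeholders.
apiVersion: v1
kind: Namespace
metadata:
  name: team-a
---
apiVersion: v1
kind: ResourceQuota
metadata:
  name: team-a-quota
  namespace: team-a
spec:
  hard:
    requests.cpu: "4"        # total CPU all pods in the namespace may request
    requests.memory: 8Gi     # total memory all pods in the namespace may request
    pods: "20"               # maximum number of pods in the namespace
```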

ConfigMaps and Secrets: Configuration Storage

ConfigMaps store non-sensitive configuration data. Secrets store sensitive information like passwords and API keys. Pods reference these as environment variables or mounted volumes. Never hard-code configuration into container images.
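The sketch below shows both ways of consuming configuration: environment variables and a mounted volume. All names, keys, and values (app-config, app-secret, LOG_LEVEL, API_KEY) are hypothetical, and in a real setup the Secret value would come from a secure source rather than a manifest checked into version control.

```yaml
# Hypothetical example: all names, keys, and values are placeholders.
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config
data:
  LOG_LEVEL: info
---
apiVersion: v1
kind: Secret
metadata:
  name: app-secret
type: Opaque
stringData:
  API_KEY: replace-me          # placeholder, never commit real secrets
---
apiVersion: v1
kind: Pod
metadata:
  name: config-demo
spec:
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "while true; do sleep 3600; done"]
      envFrom:
        - configMapRef:
            name: app-config   # every ConfigMap key becomes an env variable
      env:
        - name: API_KEY
          valueFrom:
            secretKeyRef:      # single Secret key exposed as an env variable
              name: app-secret
              key: API_KEY
      volumeMounts:
        - name: config
          mountPath: /etc/app  # each ConfigMap key appears as a file here
          readOnly: true
  volumes:
    - name: config
      configMap:
        name: app-config
```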

StatefulSets: For Stateful Applications

StatefulSets manage applications requiring stable network identities and persistent storage, such as databases. Unlike Deployments, StatefulSets maintain ordered, stable identities for each pod.
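A minimal sketch, assuming a PostgreSQL image and a pre-existing Secret named db-secret (both are illustrative choices): the headless Service gives each pod a stable DNS name, and volumeClaimTemplates gives each pod its own persistent volume. The pods are named db-0, db-1, and db-2 and are created in that order.

```yaml
# Hypothetical example: names, image, Secret, and sizes are placeholders.
apiVersion: v1
kind: Service
metadata:
  name: db
spec:
  clusterIP: None            # headless Service: per-pod DNS records
  selector:
    app: db
  ports:
    - port: 5432
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: db
spec:
  serviceName: db            # ties pod identities to the headless Service
  replicas: 3
  selector:
    matchLabels:
      app: db
  template:
    metadata:
      labels:
        app: db
    spec:
      containers:
        - name: postgres
          image: postgres:16
          env:
            - name: POSTGRES_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: db-secret      # assumed to exist already
                  key: password
            - name: PGDATA
              value: /var/lib/postgresql/data/pgdata
          ports:
            - containerPort: 5432
          volumeMounts:
            - name: data
              mountPath: /var/lib/postgresql/data
  volumeClaimTemplates:      # one PersistentVolumeClaim per pod
    - metadata:
        name: data
      spec:
        accessModes: ["ReadWriteOnce"]
        resources:
          requests:
            storage: 10Gi
```

Note that this creates three independent database instances with stable identities and storage; replication between them is the application's job and is not configured here.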

DaemonSets and Labels

DaemonSets ensure a pod runs on every node, useful for logging agents or monitoring tools. Labels and selectors enable organization and querying of Kubernetes objects. They form the foundation for how components discover each other.
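The following sketch shows the shape of a DaemonSet and how labels tie it together: the selector finds the pods it owns by the app: node-agent label. The name, label, and busybox placeholder container are assumptions; a real agent would use a logging or monitoring image.

```yaml
# Hypothetical example: name, label, and image stand in for a real agent.
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-agent
  labels:
    app: node-agent
spec:
  selector:
    matchLabels:
      app: node-agent        # selects the pods this DaemonSet owns
  template:
    metadata:
      labels:
        app: node-agent      # label the selector above matches
    spec:
      containers:
        - name: agent
          image: busybox:1.36
          command: ["sh", "-c", "while true; do sleep 3600; done"]
```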

GKE Cluster Architecture and Management

A GKE cluster consists of a control plane (managed by Google) and worker nodes (running your containers). Understanding this architecture helps you troubleshoot and optimize your cluster.

The Control Plane: Cluster Management

The control plane handles cluster management tasks. It includes the Kubernetes API server, the etcd database for state storage, the scheduler for pod placement, and controllers that maintain desired state. In GKE, Google manages the entire control plane. You interact with it only through the Kubernetes API (for example, with kubectl) and never administer its machines directly, which simplifies operations. Regional clusters replicate the control plane across zones for high availability.

Worker Nodes and Node Pools

Worker nodes are Compute Engine instances running the kubelet (node agent) and container runtime. You organize nodes into node pools, which are groups with identical configurations. This allows different workloads to run on optimized hardware. For example, use CPU-optimized nodes for compute jobs and memory-optimized nodes for data processing.
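To steer a workload onto a particular node pool, a pod can select on the cloud.google.com/gke-nodepool label that GKE sets on its nodes. In this sketch the pool name highmem-pool, the pod name, and the memory figure are hypothetical.

```yaml
# Hypothetical example: pool name, pod name, and sizes are placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: analytics-worker
spec:
  nodeSelector:
    cloud.google.com/gke-nodepool: highmem-pool   # schedule only onto this pool
  containers:
    - name: worker
      image: busybox:1.36
      command: ["sh", "-c", "while true; do sleep 3600; done"]
      resources:
        requests:
          memory: 8Gi        # large request that suits memory-optimized nodes
```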

Cluster Autoscaling: Automatic Node Management

GKE can automatically add or remove nodes based on demand. When pods need more resources than available, the cluster autoscaler adds nodes. When utilization drops, it removes unused nodes. This optimizes costs and ensures applications always have required resources.

Pod Autoscaling: Automatic Replica Adjustment

Horizontal Pod Autoscaling (HPA) adjusts replica counts based on metrics like CPU usage. If average CPU exceeds your target, HPA creates more pods. If below target, it removes pods. Vertical Pod Autoscaling (VPA) recommends or automatically adjusts resource requests and limits for containers.
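A sketch of an HPA targeting a Deployment named web (a hypothetical name), with illustrative replica bounds and CPU target. CPU utilization is measured relative to each container's CPU request, so the target pods need resource requests set for this to work.

```yaml
# Hypothetical example: target name, bounds, and threshold are placeholders.
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 60   # percent of requested CPU, averaged over pods
```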

Networking: VPC and Pod Communication

GKE uses Google Cloud VPC (Virtual Private Cloud) for networking. Pods automatically receive IP addresses from a pod CIDR range. Services abstract the underlying pod networking complexity. GKE offers standard routing and advanced options for specialized networking needs.

Built-In Security Features

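GKE supports network policies, RBAC (Role-Based Access Control), Workload Identity for pod-to-Google-Cloud-service authentication, and Pod Security Standards enforced through Pod Security Admission (the replacement for PodSecurityPolicy, which was removed in Kubernetes 1.25). These let you enforce security standards across your cluster without third-party tools, though some features, such as network policy enforcement, may need to be enabled on the cluster first.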
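As one example of these controls, the sketch below pairs a default-deny ingress policy with a rule that lets only frontend pods reach the API pods. The namespace, labels, and port are hypothetical, and the policies only take effect if network policy enforcement is active on the cluster.

```yaml
# Hypothetical example: namespace, labels, and port are placeholders.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-ingress
  namespace: team-a
spec:
  podSelector: {}            # empty selector: applies to every pod in the namespace
  policyTypes:
    - Ingress                # no ingress rules listed, so all inbound traffic is denied
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-api
  namespace: team-a
spec:
  podSelector:
    matchLabels:
      app: api               # the pods being protected
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              app: frontend  # only these pods may connect
      ports:
        - protocol: TCP
          port: 8080
```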

Deploying Applications and Managing Workloads on GKE

Deploying applications on GKE means writing Kubernetes manifests that define your desired infrastructure state. GKE then ensures your cluster matches that state.

Creating Your First Deployment

Start with a container image stored in Artifact Registry (the successor to the now-deprecated Container Registry, GCR). Write a Deployment manifest in YAML specifying the container image, resource requests and limits, environment variables, and replica count. Run kubectl apply -f with the manifest file to create the resources. Kubernetes then schedules the pods, the nodes pull the image, and the Deployment controller keeps the requested number of replicas running.
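A sketch of such a manifest follows. The image path uses the Artifact Registry format LOCATION-docker.pkg.dev/PROJECT/REPOSITORY/IMAGE:TAG, but the specific region, project, repository, app name, port, and resource figures are all hypothetical.

```yaml
# Hypothetical example: image path, names, port, and sizes are placeholders.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-app
          image: us-central1-docker.pkg.dev/my-project/my-repo/my-app:1.0.0
          ports:
            - containerPort: 8080
          env:
            - name: APP_ENV
              value: production
          resources:
            requests:          # what the scheduler reserves for the container
              cpu: 250m
              memory: 256Mi
            limits:            # hard ceiling enforced at runtime
              cpu: 500m
              memory: 512Mi
```

Saved as deployment.yaml, this would be applied with kubectl apply -f deployment.yaml.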

Rolling Updates: Zero-Downtime Deployments

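Rolling updates gradually replace old pods with new ones, maintaining service availability. Configure the maxSurge parameter (how many extra pods may be created during the update) and maxUnavailable (how many pods may be unavailable at once). Combined with readiness probes, which keep traffic away from pods that are not yet ready, this lets users experience no downtime during application updates.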
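The fragment below sketches where these settings live inside a Deployment spec. The replica count, the probe path /healthz, and port 8080 are assumptions for illustration. With 4 replicas, maxSurge 1, and maxUnavailable 0, an update runs at most 5 pods at a time and never drops below 4 available pods.

```yaml
# Fragment of a Deployment (metadata, selector, labels, and image omitted).
# Hypothetical values: replica count, probe path, and port are placeholders.
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1            # at most 1 pod above the desired replica count
      maxUnavailable: 0      # never fewer than the desired count available
  template:
    spec:
      containers:
        - name: my-app
          readinessProbe:    # a new pod receives traffic only after this passes
            httpGet:
              path: /healthz
              port: 8080
            initialDelaySeconds: 5
            periodSeconds: 10
```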

Advanced Deployment Patterns

Canary deployments gradually roll out new versions to a percentage of traffic. You verify changes before full rollout. Blue-green deployments maintain two identical production environments. Switch traffic between them for instant rollback if issues occur.
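One simple way to sketch blue-green switching in plain Kubernetes, assuming two Deployments whose pods carry the hypothetical labels version: blue and version: green, is to point a single Service at one of them through its selector. Changing the version value and re-applying the manifest moves traffic to the other environment; changing it back is the rollback.

```yaml
# Hypothetical example: names, labels, and ports are placeholders.
apiVersion: v1
kind: Service
metadata:
  name: my-app
spec:
  selector:
    app: my-app
    version: blue        # set to "green" to send traffic to the new environment
  ports:
    - port: 80
      targetPort: 8080
```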

Storage: Persistent Data Beyond Pod Lifecycles

Persistent Volumes (PV) and Persistent Volume Claims (PVC) provide storage that persists beyond pod lifecycles. On GKE, a PVC is typically backed by a Compute Engine persistent disk that is provisioned dynamically. For object data such as files and backups, many applications use Cloud Storage instead. StatefulSets manage stateful applications requiring ordered deployment and stable identities.
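A minimal sketch of a claim is shown below. The claim name and size are placeholders, and the storage class standard-rwo is an assumption about what the cluster offers; kubectl get storageclass lists the classes actually available.

```yaml
# Hypothetical example: claim name, class, and size are placeholders.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: data-claim
spec:
  accessModes:
    - ReadWriteOnce                # mounted read-write by a single node
  storageClassName: standard-rwo   # assumed class; omit to use the cluster default
  resources:
    requests:
      storage: 20Gi
```

A pod then uses the storage by listing a volume with persistentVolumeClaim.claimName set to data-claim and mounting it into a container.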

Configuration Management Best Practices

Use ConfigMaps for non-sensitive data and Secrets for passwords and API keys. Never embed configuration in container images. This approach enables the same image across development, staging, and production environments.

Batch and Scheduled Work

Kubernetes Jobs handle batch processing tasks. CronJobs schedule tasks to run at specific times. Service mesh technologies like Istio can be installed on GKE for advanced traffic management, observability, and security policies across microservices.
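For the scheduled case, a CronJob wraps a Job template in a cron schedule. In this sketch the job name, schedule, and placeholder command are illustrative assumptions.

```yaml
# Hypothetical example: name, schedule, and command are placeholders.
apiVersion: batch/v1
kind: CronJob
metadata:
  name: nightly-report
spec:
  schedule: "0 2 * * *"          # standard cron syntax: 02:00 every day
  concurrencyPolicy: Forbid      # skip a run if the previous one is still going
  jobTemplate:
    spec:
      backoffLimit: 3            # retry a failing Job up to 3 times
      template:
        spec:
          restartPolicy: OnFailure
          containers:
            - name: report
              image: busybox:1.36
              command: ["sh", "-c", "echo generating report"]
```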

GKE Monitoring, Logging, and Troubleshooting

Observability is critical for maintaining healthy GKE clusters in production. The right monitoring setup catches most operational issues early and enables quick incident response.

Monitoring Cluster Health

Google Cloud Monitoring (formerly Stackdriver Monitoring) automatically collects system metrics from your GKE cluster, including node CPU and memory usage and pod-level metrics. Custom application metrics can be collected as well once you configure them. Create dashboards to visualize cluster health and set up alerts when metrics exceed thresholds. Deploying Prometheus in the cluster can provide more detailed metrics and more control over retention.

Logging Application and System Activity

Cloud Logging (formerly Stackdriver Logging) captures cluster events, kubelet logs, and application container logs; control plane component logs can be enabled as well. Logs are collected automatically and are searchable in the Google Cloud console. Centralized logging enables quick troubleshooting across your entire cluster.

Essential kubectl Troubleshooting Commands

These commands are essential for troubleshooting issues:

- kubectl get pods shows each pod's status, ready containers, and restart count

- kubectl describe pod reveals detailed information and events

- kubectl logs displays container output for debugging

- kubectl exec allows running commands inside containers

Diagnosing Common Pod Issues

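ImagePullBackOff indicates the container image could not be pulled. Check the image name, tag, and registry access. CrashLoopBackOff means the container starts and then crashes repeatedly. Review logs and application configuration. Pending pods have not been scheduled, most often because of insufficient cluster resources. Check resource requests against node capacity, and look at the events in kubectl describe pod for the scheduler's reason.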

Network and Storage Troubleshooting

Network connectivity issues often involve network policies. Review policies and test DNS resolution with kubectl exec. RBAC issues prevent users from accessing resources. Review role bindings with kubectl get rolebindings. Storage problems usually involve PVC binding. Verify storage classes and available storage capacity.

Production Readiness

The GKE Best Practices guide and official documentation provide detailed guidance on production readiness, cost optimization, and security hardening. Set up monitoring and alerting from day one so problems surface before they affect users.