Multi-AZ and Multi-Region Deployments

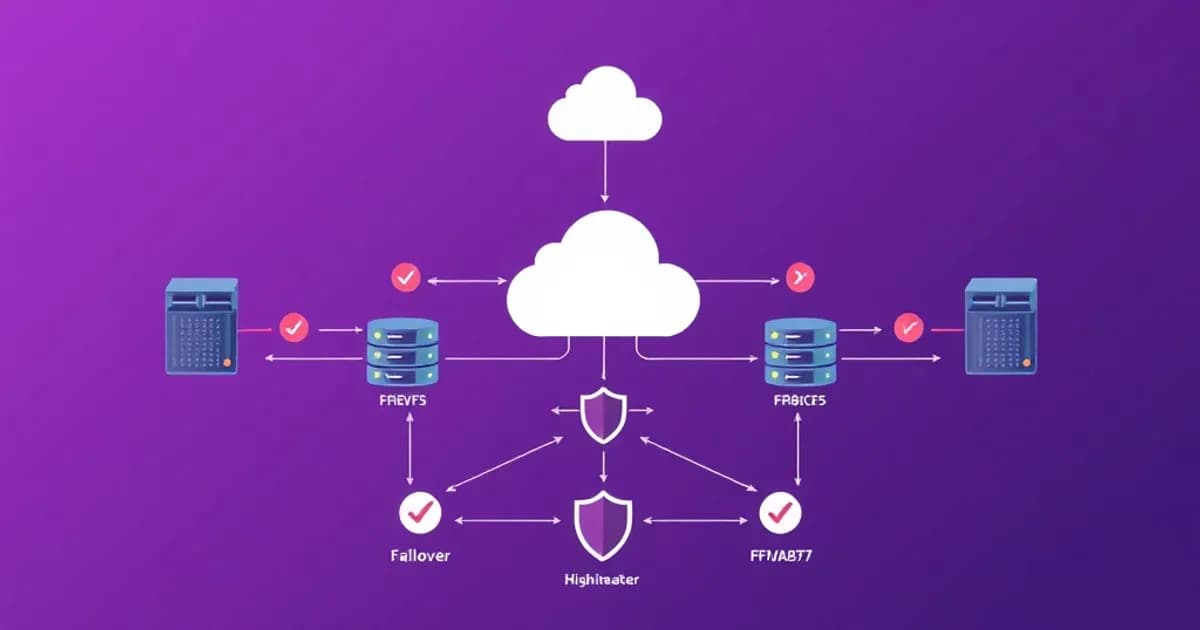

Multi-Availability Zone (Multi-AZ) deployments are foundational to AWS high availability. An Availability Zone consists of one or more physically separate data centers within an AWS region, with independent power, cooling, and networking. Deploying across multiple AZs lets your application keep running if one AZ fails, provided the remaining AZs have enough capacity.
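To see why spreading across AZs helps, here is a minimal sketch of the arithmetic. It assumes AZs fail independently and that any single surviving AZ can carry the full load; both are simplifications, and the 99% figure is an illustrative input, not an AWS number.

```python
def combined_availability(per_az: float, az_count: int) -> float:
    """Probability that at least one of az_count AZs is up.

    Assumes independent failures (a simplification).
    """
    if not 0.0 <= per_az <= 1.0:
        raise ValueError("per_az must be between 0 and 1")
    if az_count < 1:
        raise ValueError("az_count must be at least 1")
    # The deployment is down only if every AZ is down at the same time.
    return 1.0 - (1.0 - per_az) ** az_count


for n in (1, 2, 3):
    print(n, f"{combined_availability(0.99, n):.6f}")
```

Each extra AZ multiplies the chance of total outage by the per-AZ failure probability, which is why the second AZ gives the largest gain.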

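For RDS databases, Multi-AZ provides synchronous replication between a primary and a standby instance in a different AZ. Automatic failover typically completes in 1-2 minutes. Other managed services such as ElastiCache offer their own Multi-AZ options, although the replication mechanism differs by service (ElastiCache replication, for example, is asynchronous).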

Multi-Region Architecture

Multi-region deployments distribute your application across geographically distinct AWS regions. This protects against regional-level failures and enables disaster recovery with Recovery Time Objectives (RTO) that can be measured in minutes, depending on the recovery strategy you choose.

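Active-active multi-region architectures serve traffic from several regions at once and must keep data replicated between them. DynamoDB Global Tables accept writes in every replica region and replicate them to the others. Aurora Global Database replicates from a single primary (writer) region to read-only secondary regions, so it suits local reads and disaster recovery rather than multi-region writes.

Active-passive setups use Route 53 health checks to fail over when the primary region becomes unhealthy. You can manage the switchover manually or automate it with Route 53 failover routing policies.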
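The active-passive decision can be sketched in a few lines. This is a toy model of the selection logic, not Route 53's API; the `Endpoint` type, the region names, and the choice to fall back to the primary when nothing is healthy are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    region: str
    role: str      # "PRIMARY" or "SECONDARY"
    healthy: bool  # result of the most recent health evaluation


def resolve(endpoints):
    """Pick the endpoint to answer with: healthy primary first, then secondary."""
    for role in ("PRIMARY", "SECONDARY"):
        for ep in endpoints:
            if ep.role == role and ep.healthy:
                return ep
    # Nothing is healthy: this sketch falls back to the primary (an assumption).
    return next(ep for ep in endpoints if ep.role == "PRIMARY")


normal = [Endpoint("us-east-1", "PRIMARY", True),
          Endpoint("us-west-2", "SECONDARY", True)]
outage = [Endpoint("us-east-1", "PRIMARY", False),
          Endpoint("us-west-2", "SECONDARY", True)]
print(resolve(normal).region, resolve(outage).region)
```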

RTO and RPO Concepts

Understanding RTO (how quickly you must recover) and RPO (how much data loss, measured in time, is acceptable) is critical for the exam. For region-wide failures, multi-region deployments can achieve a far better RTO and RPO than single-region solutions that rely on restoring from backups.

RDS Multi-AZ uses synchronous replication, so a failover loses no committed data. Multi-region replication is usually asynchronous because of geographic distance, so a small amount of recent data can be lost (a non-zero RPO). Multi-region also introduces higher cost and complexity but provides greater resilience.
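A short worked example may help keep the two terms apart. The timestamps and the 30-second and 5-minute objectives below are made up for illustration.

```python
from datetime import datetime, timedelta


def achieved_rpo(last_replicated: datetime, failure: datetime) -> timedelta:
    """Data written after the last replicated point is lost."""
    return failure - last_replicated


def achieved_rto(failure: datetime, restored: datetime) -> timedelta:
    """Time the service was unavailable."""
    return restored - failure


failure = datetime(2024, 1, 1, 12, 0, 0)
rpo = achieved_rpo(datetime(2024, 1, 1, 11, 59, 55), failure)
rto = achieved_rto(failure, datetime(2024, 1, 1, 12, 4, 0))

# Hypothetical objectives: lose at most 30 s of data, recover within 5 min.
meets_objectives = rpo <= timedelta(seconds=30) and rto <= timedelta(minutes=5)
print(rpo, rto, meets_objectives)
```

RPO looks backward from the failure (data lost), while RTO looks forward from it (downtime).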

Load Balancing and Elastic Load Balancing Services

Elastic Load Balancing (ELB) distributes incoming traffic across multiple targets. This prevents overload on any single instance and improves fault tolerance.

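This section covers three load balancer types, each optimized for different scenarios. (AWS also offers Gateway Load Balancer for routing traffic through virtual appliances; it is not covered here.) Choose based on your protocol requirements and performance needs.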

The Three Load Balancer Types

- Application Load Balancer (ALB) operates at Layer 7 (application layer). It's ideal for HTTP/HTTPS traffic and supports host-based and path-based routing. Route different requests to different target groups based on hostnames or URL paths.
- Network Load Balancer (NLB) operates at Layer 4 (transport layer). It handles millions of requests per second with ultra-high performance. Use it for extreme performance needs and non-HTTP protocols like TCP and UDP.
- Classic Load Balancer (CLB) is the legacy option. It supports both Layer 4 and Layer 7 but lacks advanced routing. It's generally superseded by ALB and NLB for new deployments.
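The host-based and path-based routing described for ALB can be sketched as a priority-ordered rule list. This is a simplified model, not the ELB API: the `Rule` type, the target group names, and the hostnames are hypothetical, and `fnmatchcase` stands in for ALB's own wildcard matching.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int                # lower number is evaluated first
    target_group: str
    host: Optional[str] = None   # e.g. "api.example.com"
    path: Optional[str] = None   # e.g. "/images/*"


def route(rules, host, path, default="default-tg"):
    """Return the target group of the first rule whose conditions all match."""
    for rule in sorted(rules, key=lambda r: r.priority):
        host_ok = rule.host is None or fnmatchcase(host.lower(), rule.host.lower())
        path_ok = rule.path is None or fnmatchcase(path, rule.path)
        if host_ok and path_ok:
            return rule.target_group
    return default  # no rule matched: use the default action


rules = [
    Rule(priority=10, target_group="api-tg", host="api.example.com"),
    Rule(priority=20, target_group="images-tg", path="/images/*"),
]
print(route(rules, "api.example.com", "/v1/users"))
print(route(rules, "www.example.com", "/images/logo.png"))
```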

Health Checks and Target Management

Load balancers distribute traffic across the AZs you enable for them. Health checks verify that targets are healthy by sending periodic requests. Targets that fail the checks stop receiving new requests and start receiving them again once they pass.

For high availability, register targets in at least two AZs. Cross-zone load balancing distributes traffic evenly across all registered targets in all enabled AZs, rather than only across the targets in each load balancer node's own AZ. It is enabled by default for ALB and disabled by default for NLB.
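Health state usually changes only after several consecutive results, which avoids flapping on a single failed probe. The sketch below models that behavior; the class name and the threshold defaults are illustrative assumptions, not values read from any load balancer.

```python
class TargetHealth:
    """Tracks one target's health using consecutive-result thresholds."""

    def __init__(self, healthy_threshold=3, unhealthy_threshold=2, healthy=True):
        self.healthy_threshold = healthy_threshold
        self.unhealthy_threshold = unhealthy_threshold
        self.healthy = healthy
        self._streak = 0  # consecutive results that disagree with current state

    def record(self, check_passed: bool) -> bool:
        """Record one health check result and return the resulting state."""
        if check_passed == self.healthy:
            self._streak = 0  # result agrees with the current state
            return self.healthy
        self._streak += 1
        needed = self.healthy_threshold if check_passed else self.unhealthy_threshold
        if self._streak >= needed:
            self.healthy = check_passed
            self._streak = 0
        return self.healthy


target = TargetHealth()
states = [target.record(result) for result in (False, False, True, True, True)]
print(states)
```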
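The effect of cross-zone load balancing is easiest to see with uneven AZs. The sketch below assumes clients spread requests evenly across the load balancer nodes in each AZ; the AZ names and target counts are illustrative.

```python
def traffic_share(targets_per_az: dict, cross_zone: bool) -> dict:
    """Fraction of total traffic received by EACH target in each AZ."""
    zones = {az: n for az, n in targets_per_az.items() if n > 0}
    if not zones:
        return {}
    if cross_zone:
        # Every target, in any AZ, gets an equal share of all traffic.
        total = sum(zones.values())
        return {az: 1 / total for az in zones}
    # Each AZ gets an equal slice, split among that AZ's own targets.
    per_zone = 1 / len(zones)
    return {az: per_zone / n for az, n in zones.items()}


layout = {"az-a": 2, "az-b": 8}
print(traffic_share(layout, cross_zone=False))
print(traffic_share(layout, cross_zone=True))
```

Without cross-zone balancing, the two targets in the smaller AZ each carry four times the load of a target in the larger AZ.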

Connection Management

Sticky sessions (session affinity) route requests from the same client to the same target. This matters for applications that keep session state on the instance. Connection draining, called deregistration delay on ALB and NLB, lets in-flight requests complete before a deregistering target is removed, preventing abrupt disconnections.

Auto Scaling and Capacity Management

Auto Scaling automatically adjusts the number of EC2 instances to match demand. It maintains high availability while optimizing costs.

An Auto Scaling Group (ASG) contains a collection of EC2 instances and defines scaling policies. You specify minimum, maximum, and desired capacity. If an instance fails, Auto Scaling automatically replaces it with a new instance.

Scaling Policies

Target tracking policies automatically scale to maintain a target metric value. For example, keep average CPU utilization at 50%.
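One way to reason about target tracking is as proportional scaling. AWS does not publish its exact algorithm, so treat the following as a simplified mental model; the function name and the capacity limits are assumptions.

```python
import math


def desired_capacity(current: int, metric: float, target: float,
                     minimum: int, maximum: int) -> int:
    """Scale capacity in proportion to how far the metric is from its target."""
    if target <= 0:
        raise ValueError("target must be positive")
    proposed = math.ceil(current * metric / target)
    # Never go outside the group's configured bounds.
    return max(minimum, min(maximum, proposed))


# 4 instances at 75% average CPU with a 50% target.
print(desired_capacity(current=4, metric=75.0, target=50.0, minimum=2, maximum=10))
```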

Step scaling policies define specific actions when metrics cross thresholds. This offers more granular control than target tracking.
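Step adjustments are defined as ranges relative to the alarm threshold, with a different capacity change for each range. A minimal sketch follows; the `Step` type and the specific bounds and adjustments are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    lower: float             # inclusive offset above the alarm threshold
    upper: Optional[float]   # exclusive offset; None means unbounded
    add_instances: int


def step_adjustment(metric: float, threshold: float, steps) -> int:
    """Return how many instances to add for the current metric value."""
    breach = metric - threshold
    if breach < 0:
        return 0  # alarm threshold not crossed
    for step in steps:
        if breach >= step.lower and (step.upper is None or breach < step.upper):
            return step.add_instances
    return 0


steps = [Step(0, 10, 1), Step(10, 20, 2), Step(20, None, 4)]
for cpu in (50, 65, 75, 95):
    print(cpu, step_adjustment(cpu, threshold=60, steps=steps))
```

Larger breaches trigger larger adjustments, which is the extra control step scaling gives over a single fixed response.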

Scheduled scaling adjusts capacity based on predictable demand patterns. Increase capacity before business hours or before known traffic spikes.

Lifecycle Hooks and Load Balancer Integration

Lifecycle hooks enable you to perform custom actions during scaling events. For example, gracefully deregister instances from a load balancer before termination.

When an ASG is attached to a load balancer's target group, its instances are registered automatically as they launch and deregistered as they terminate. This distributes incoming traffic across new and existing instances seamlessly.

High Availability Configuration

For high availability, configure ASGs to span multiple AZs. Use a minimum capacity of at least 2, spread across AZs, so that instances continue serving traffic if one AZ fails.

Combined with health checks, Auto Scaling provides self-healing capabilities. EC2 status checks catch instance failures; to also replace instances whose application has crashed, enable ELB health checks on the ASG.
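Surviving an AZ failure also depends on how much capacity is left afterward. The sketch below sizes each AZ so that the remaining ones can still carry the required load; it assumes instances are spread evenly, and the numbers are illustrative.

```python
import math


def instances_per_az(required: int, az_count: int, az_failures_tolerated: int = 1) -> int:
    """Instances to run in each AZ so the survivors still meet `required`."""
    surviving = az_count - az_failures_tolerated
    if surviving < 1:
        raise ValueError("need at least one surviving AZ")
    return math.ceil(required / surviving)


# Need 6 instances' worth of capacity even after losing one AZ.
for azs in (2, 3):
    per_az = instances_per_az(required=6, az_count=azs)
    print(azs, per_az, per_az * azs)
```

With two AZs the group needs 12 instances in total, but with three it needs only 9, which is one reason three AZs are often cheaper for the same resilience.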

Designing Highly Available Databases and Caching

Database high availability requires careful selection of services and configuration. Different services provide different consistency models and recovery capabilities.

RDS and Aurora

Amazon RDS provides Multi-AZ deployments where the primary database synchronously replicates to a standby instance in a different AZ. If the primary fails, RDS automatically promotes the standby in 1-2 minutes with no manual intervention.

Read replicas are read-only copies of the database that offload query traffic and improve read performance. They replicate asynchronously, can be located in other regions, and can be promoted to standalone databases for disaster recovery.

Aurora is a relational database engine designed for high availability. It automatically keeps six copies of your data across three AZs with no additional configuration. Aurora also supports Aurora Replicas in the same region, which double as failover targets, and cross-region replicas for disaster recovery.

NoSQL and Caching

DynamoDB is a NoSQL database that replicates data across multiple AZs within a region by default. DynamoDB Global Tables replicate data asynchronously across multiple regions by default, usually within about a second, and accept writes in every replica region, enabling active-active architectures.

Amazon ElastiCache provides managed Redis and Memcached for caching. Redis supports replication and Multi-AZ with automatic failover. Memcached has no replication: you can spread its nodes across AZs, but the data on a failed node is lost. Redis with cluster mode enabled distributes data across multiple shards for scalability and fault tolerance.
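When the same item is written in two regions at nearly the same time, Global Tables reconcile the conflict with a last-writer-wins rule. The sketch below illustrates the idea only; the `Write` type and the region-name tie-breaker are assumptions, not DynamoDB's internal mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Write:
    region: str
    timestamp_ms: int
    value: str


def resolve_conflict(a: Write, b: Write) -> Write:
    """Keep the write with the later timestamp.

    Ties are broken by region name so every replica picks the same winner
    (an assumption made for this sketch).
    """
    return max(a, b, key=lambda w: (w.timestamp_ms, w.region))


east = Write("us-east-1", 1_700_000_000_100, "cart=3 items")
west = Write("us-west-2", 1_700_000_000_250, "cart=4 items")
print(resolve_conflict(east, west).value)
```

The earlier write is silently discarded, so applications that cannot tolerate this should avoid writing the same item from multiple regions.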

Backup and Recovery Strategies

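RDS automated backups combine daily snapshots with transaction logs. The retention period is configurable up to 35 days, and 7 days is the console default. This enables point-in-time recovery to any moment within that window.

Aurora backs up cluster data to S3 continuously and automatically. Its backup retention period is configurable from 1 to 35 days, the same upper limit as RDS.

DynamoDB point-in-time recovery, once enabled, allows you to restore to any point in the last 35 days.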
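A point-in-time recovery window is bounded by both the retention period and the moment backups were first enabled. The check below is a simplified sketch: the function and parameter names are hypothetical, and real services may lag the latest restorable time slightly behind the present.

```python
from datetime import datetime, timedelta, timezone


def can_restore_to(target: datetime, now: datetime,
                   retention_days: int, earliest_backup: datetime) -> bool:
    """True if `target` falls inside the restorable window."""
    window_start = max(earliest_backup, now - timedelta(days=retention_days))
    return window_start <= target <= now


now = datetime(2024, 3, 1, tzinfo=timezone.utc)
enabled = datetime(2024, 1, 1, tzinfo=timezone.utc)

print(can_restore_to(now - timedelta(days=10), now, 35, enabled))
print(can_restore_to(now - timedelta(days=40), now, 35, enabled))
```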

Choose the right database service based on consistency requirements, performance needs, and RTO/RPO targets.

Monitoring, Health Checks, and Failover Mechanisms

Effective high availability requires robust monitoring and health checking across multiple layers. Each layer detects failures and contributes to overall resilience.

CloudWatch and Alarms

CloudWatch monitors AWS resources and collects metrics like CPU utilization and network throughput. Alarms trigger when metrics cross thresholds, enabling automated responses through SNS, Lambda, or Auto Scaling.
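An alarm typically evaluates the most recent datapoints and fires when enough of them breach the threshold. The sketch below models an "M out of N" evaluation with a greater-than comparison; the function and its parameter names are illustrative, and missing-data handling is reduced to a single case.

```python
def alarm_state(datapoints, threshold: float,
                evaluation_periods: int, datapoints_to_alarm: int) -> str:
    """Evaluate the last `evaluation_periods` datapoints against a threshold."""
    recent = datapoints[-evaluation_periods:]
    if len(recent) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    breaching = sum(1 for value in recent if value > threshold)
    return "ALARM" if breaching >= datapoints_to_alarm else "OK"


cpu = [40, 85, 60, 90, 95]
# Alarm when 2 of the last 3 datapoints exceed 80% CPU.
print(alarm_state(cpu, threshold=80, evaluation_periods=3, datapoints_to_alarm=2))
```

Requiring several breaching datapoints trades a slower reaction for fewer false alarms from short spikes.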

CloudWatch Synthetics runs scheduled tests (canaries) against your application endpoints. This can detect issues before they affect real users.

Route 53 Health Checks

Route 53 health checks monitor the health of endpoints by sending periodic requests and analyzing responses. Health checks support HTTP, HTTPS, TCP, and calculated checks that combine multiple checks with logic.

When Route 53 detects an unhealthy endpoint, it stops returning that endpoint in DNS responses. This implements DNS-based failover automatically, although clients keep using cached answers until the record's TTL expires.
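A rough upper bound on DNS failover time adds the detection time to the DNS cache lifetime. The estimate below is back-of-the-envelope arithmetic; the 30-second interval, threshold of 3, and 60-second TTL are example inputs, and it ignores resolvers that disregard TTLs.

```python
def worst_case_failover_seconds(check_interval_s: int,
                                failure_threshold: int,
                                record_ttl_s: int) -> int:
    """Detection time plus the longest a client may cache the old answer."""
    detection = check_interval_s * failure_threshold
    return detection + record_ttl_s


estimate = worst_case_failover_seconds(check_interval_s=30,
                                       failure_threshold=3,
                                       record_ttl_s=60)
print(estimate)
```

Shorter check intervals and lower TTLs reduce this bound at the cost of more health check traffic and more DNS queries.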

Load Balancer and Database Failover

Application Load Balancers include built-in health checks that determine which targets receive traffic. When a target is deregistered, connection draining (deregistration delay) lets in-flight requests finish first.

RDS Multi-AZ standbys and Aurora Replicas provide automatic failure detection and promotion when the primary instance fails. Layering these mechanisms helps failures get detected and traffic rerouted quickly.

Integration and MTTR

Lambda and SNS integrate with health checks and alarms to trigger custom failover logic. Mean Time To Recovery (MTTR) measures how quickly your system recovers from failures.
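MTTR ties directly to availability through the standard relation availability = MTBF / (MTBF + MTTR). The incident durations below are made-up inputs for illustration.

```python
def mean_time_to_recovery(recovery_minutes) -> float:
    """Average recovery time across incidents."""
    if not recovery_minutes:
        raise ValueError("need at least one incident")
    return sum(recovery_minutes) / len(recovery_minutes)


def availability(mtbf_minutes: float, mttr_minutes: float) -> float:
    """Steady-state availability from mean time between failures and MTTR."""
    return mtbf_minutes / (mtbf_minutes + mttr_minutes)


mttr = mean_time_to_recovery([4, 6, 5])
print(mttr, availability(mtbf_minutes=995, mttr_minutes=mttr))
```

For a fixed failure rate, halving MTTR roughly halves downtime, which is why automated failover pays off.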

Automated health checks and failover mechanisms minimize MTTR by eliminating manual intervention. For the exam, know which services support health checks, what metrics are monitored, and how failures trigger failover at each layer.