Core Scheduler Architecture and Components

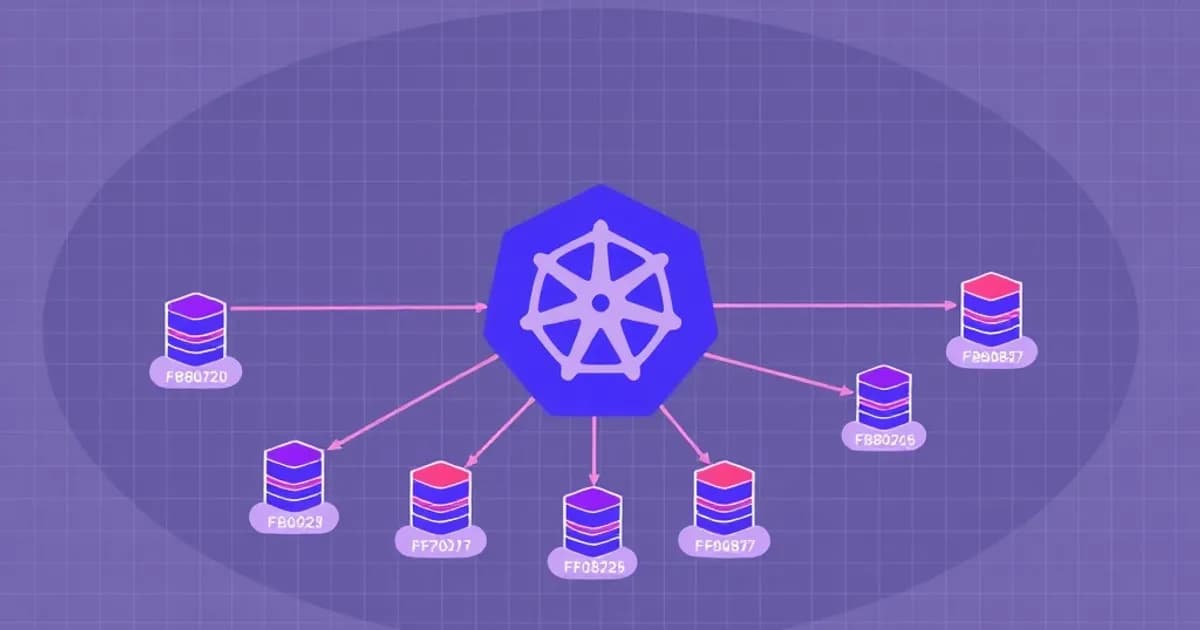

The Kubernetes scheduler (kube-scheduler) is the control plane component that watches for newly created pods with no assigned node. For each such pod, it evaluates the available nodes and selects the most suitable one based on the pod's requirements and the configured scheduling rules.

How the Scheduler Works

The scheduler runs as a separate component in the control plane and watches the API server for unscheduled pods. When it finds a pod whose spec.nodeName field is empty, it evaluates the nodes and picks one based on the pod's requirements.

The scheduling process involves three main phases:

- Filtering: Eliminates nodes that cannot run the pod, for example because of insufficient unrequested CPU or memory, untolerated taints, or unmet required affinity rules

- Scoring: Ranks remaining nodes based on resource utilization, node affinity preferences, and custom rules

- Binding: Commits the decision by creating a Binding through the API server, which sets the pod's spec.nodeName and persists the assignment in etcd
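The three phases can be illustrated with a small simulation. This is a minimal sketch, not the kube-scheduler implementation: the `Node` and `Pod` classes, the millicore/MiB fields, and the "most CPU left" scoring rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_m: int    # CPU not yet requested by other pods, in millicores
    free_mem_mi: int   # memory not yet requested by other pods, in MiB


@dataclass
class Pod:
    name: str
    cpu_request_m: int
    mem_request_mi: int
    node_name: Optional[str] = None  # mirrors spec.nodeName; None means unscheduled


def filter_nodes(pod: Pod, nodes: List[Node]) -> List[Node]:
    """Filtering: keep only nodes whose free capacity covers the pod's requests."""
    return [n for n in nodes
            if n.free_cpu_m >= pod.cpu_request_m
            and n.free_mem_mi >= pod.mem_request_mi]


def score_node(pod: Pod, node: Node) -> int:
    """Scoring: toy rule (an assumption) that favors the most CPU left after placement."""
    return node.free_cpu_m - pod.cpu_request_m


def schedule(pod: Pod, nodes: List[Node]) -> Optional[str]:
    feasible = filter_nodes(pod, nodes)
    if not feasible:
        return None  # no feasible node: the pod would stay Pending
    best = max(feasible, key=lambda n: score_node(pod, n))
    # Binding: in a real cluster this is a Binding created through the API server.
    pod.node_name = best.name
    best.free_cpu_m -= pod.cpu_request_m
    best.free_mem_mi -= pod.mem_request_mi
    return best.name


nodes = [Node("node-a", 500, 1024), Node("node-b", 2000, 4096), Node("node-c", 4000, 256)]
pod = Pod("web", cpu_request_m=1000, mem_request_mi=512)
print(schedule(pod, nodes))  # node-b: node-a lacks CPU, node-c lacks memory
```

A pod that no node can satisfy returns `None` here, which corresponds to a pod that stays Pending in a real cluster.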

Key Interactions with Other Components

The scheduler does not talk to the kubelet or the controller manager directly; all three coordinate through the API server. The scheduler makes the placement decision, and the kubelet on the chosen node then sees the assignment and directs the container runtime to pull images and start containers.

Resource requests directly influence scheduling decisions. The scheduler compares a pod's requests with each node's allocatable capacity minus the requests of pods already assigned to it. Limits are enforced on the node at runtime and do not affect placement.

What to Study for CKA

Practice explaining the complete scheduling workflow from pod creation through container startup. Understand how the scheduler responds to node failures and resource constraints. Be able to predict scheduling outcomes given specific pod requirements and node configurations.

Node Affinity, Pod Affinity, and Anti-Affinity Rules

Affinity rules constrain which nodes your pod can run on using labels and topology. These rules come in two forms: hard requirements that block scheduling when they cannot be met, and soft preferences that raise the score of matching nodes.

Node Affinity Types

Node affinity bases rules on node labels. Two types exist:

- requiredDuringSchedulingIgnoredDuringExecution: Hard requirement. The pod remains unscheduled if no node matches.

- preferredDuringSchedulingIgnoredDuringExecution: Soft preference. The scheduler increases the priority of matching nodes but will schedule the pod elsewhere if necessary.

Use hard affinity when you need strict constraints, like scheduling only on nodes with specific hardware. Use soft affinity when you prefer certain node types but can accept alternatives.
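The difference between the two types can be sketched as a filter plus a score. This is an illustrative model only: the dictionaries mirror the shape of `nodeSelectorTerms` and `preference` entries in a pod spec, the node names and labels are made up, and the `Gt`/`Lt` operators are left out for brevity.

```python
def matches_expression(labels, expr):
    """Evaluate one matchExpressions entry against a node's labels."""
    key, op, values = expr["key"], expr["operator"], expr.get("values", [])
    if op == "In":
        return labels.get(key) in values
    if op == "NotIn":
        return labels.get(key) not in values
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    raise ValueError(f"operator not modeled in this sketch: {op}")


def matches_term(labels, term):
    # Expressions inside one term are ANDed together.
    return all(matches_expression(labels, e) for e in term["matchExpressions"])


def required_ok(labels, node_selector_terms):
    # Hard rule: terms are ORed, so a single matching term is enough.
    return any(matches_term(labels, t) for t in node_selector_terms)


def preferred_score(labels, preferred):
    # Soft rule: add up the weights of every preference the node satisfies.
    return sum(p["weight"] for p in preferred if matches_term(labels, p["preference"]))


# Hypothetical cluster: node names and labels are assumptions.
node_labels = {
    "node-a": {"disktype": "ssd", "zone": "east"},
    "node-b": {"disktype": "ssd", "zone": "west"},
    "node-c": {"disktype": "hdd", "zone": "east"},
}
required = [{"matchExpressions": [
    {"key": "disktype", "operator": "In", "values": ["ssd"]}]}]
preferred = [{"weight": 50, "preference": {"matchExpressions": [
    {"key": "zone", "operator": "In", "values": ["east"]}]}}]

scores = {name: preferred_score(labels, preferred)
          for name, labels in node_labels.items()
          if required_ok(labels, required)}
print(scores)  # {'node-a': 50, 'node-b': 0}
```

node-c is removed by the hard rule even though it satisfies the zone preference, while node-b stays eligible with a lower score.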

Pod Affinity and Anti-Affinity

Pod affinity bases rules on the labels of pods that are already running rather than on node labels. This is useful when you want pods to run together on the same node or in the same topology domain, such as a zone.

Pod anti-affinity keeps pods separated across nodes or zones, critical for high availability. The topology key determines the scope, such as kubernetes.io/hostname for per-node scope or topology.kubernetes.io/zone for zone-level scope.
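The effect of the topology key on a required anti-affinity rule can be sketched as follows. This is a simplified model with assumptions: every node carries the topology label, the selector uses only `matchLabels`-style equality, and the node and pod names are hypothetical.

```python
def label_match(pod_labels, match_labels):
    """True if the pod carries every key/value pair in the selector."""
    return all(pod_labels.get(k) == v for k, v in match_labels.items())


def allowed_nodes(node_labels, placed_pods, match_labels, topology_key):
    """Nodes where a pod with required anti-affinity may still be placed.

    placed_pods is a list of (pod_labels, node_name) tuples for running pods.
    """
    # Topology domains that already host a pod matching the selector.
    blocked = {node_labels[node][topology_key]
               for pod_labels, node in placed_pods
               if label_match(pod_labels, match_labels)}
    return [name for name, labels in node_labels.items()
            if labels[topology_key] not in blocked]


# Hypothetical cluster: two nodes in zone "east", one in zone "west".
node_labels = {
    "node-a": {"kubernetes.io/hostname": "node-a", "topology.kubernetes.io/zone": "east"},
    "node-b": {"kubernetes.io/hostname": "node-b", "topology.kubernetes.io/zone": "east"},
    "node-c": {"kubernetes.io/hostname": "node-c", "topology.kubernetes.io/zone": "west"},
}
placed = [({"app": "web"}, "node-a")]  # one replica already runs on node-a
selector = {"app": "web"}

per_node = allowed_nodes(node_labels, placed, selector, "kubernetes.io/hostname")
per_zone = allowed_nodes(node_labels, placed, selector, "topology.kubernetes.io/zone")
print(per_node)  # ['node-b', 'node-c']
print(per_zone)  # ['node-c']
```

With the hostname key only node-a is excluded; with the zone key the whole "east" zone is excluded, so the next replica can only land in "west".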

CKA Exam Focus

You must write affinity specifications from memory and predict scheduling outcomes. Common exam questions test why pods remain unscheduled when affinity requirements cannot be met. Understand how affinity rules interact with other scheduling constraints and how to use them for resilient systems.

Taints and Tolerations for Advanced Pod Placement

Taints are applied to nodes and tolerations to pods. Together they keep pods off a node unless the pod explicitly tolerates that node's taints. A taint has three components: key, value, and effect.

Taint Effects

The effect determines what happens to pods that don't tolerate the taint:

- NoSchedule: Prevents new pod scheduling. Running pods are not affected.

- NoExecute: Prevents scheduling and evicts running pods that don't tolerate the taint.

- PreferNoSchedule: Soft constraint. The scheduler prefers to avoid the node but will schedule there if necessary.

How Tolerations Work

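Tolerations in pod specifications allow pods to be scheduled on nodes with matching taints. A toleration matches a taint when the key and effect match (an omitted effect matches every effect) and either the operator is Equal, the default, with an identical value, or the operator is Exists, which ignores the value. A toleration only permits placement on a tainted node; it does not attract the pod to that node.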

For example, a node tainted with key=gpu, value=nvidia, effect=NoSchedule reserves it for GPU workloads. Only pods with matching tolerations can be scheduled there.

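With NoExecute, a toleration can set tolerationSeconds to limit how long the pod stays on the node after the taint is added. The pod is evicted once that time elapses if the taint is still present. A NoExecute toleration without tolerationSeconds lets the pod stay indefinitely.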
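The matching rules can be sketched as a small function. This is an illustrative model rather than the scheduler's code: the dictionaries mirror the fields of taints and tolerations, `tolerationSeconds` is not modeled, and the function names are assumptions.

```python
def tolerates(toleration, taint):
    """True if one toleration matches one taint."""
    effect = toleration.get("effect")
    if effect and effect != taint["effect"]:
        return False              # an omitted effect matches every effect
    key = toleration.get("key")
    operator = toleration.get("operator", "Equal")
    if not key:
        return operator == "Exists"   # empty key with Exists matches every taint
    if key != taint["key"]:
        return False
    if operator == "Exists":
        return True               # Exists ignores the value
    return toleration.get("value", "") == taint.get("value", "")


def can_schedule(tolerations, taints):
    """A node is usable if every hard taint is matched by some toleration."""
    hard = [t for t in taints if t["effect"] in ("NoSchedule", "NoExecute")]
    # PreferNoSchedule is a soft constraint, so it never blocks placement.
    return all(any(tolerates(tol, taint) for tol in tolerations) for taint in hard)


gpu_taint = {"key": "gpu", "value": "nvidia", "effect": "NoSchedule"}

exact = [{"key": "gpu", "operator": "Equal", "value": "nvidia", "effect": "NoSchedule"}]
wrong_value = [{"key": "gpu", "operator": "Equal", "value": "amd", "effect": "NoSchedule"}]
exists = [{"key": "gpu", "operator": "Exists"}]

print(can_schedule(exact, [gpu_taint]))        # True
print(can_schedule(wrong_value, [gpu_taint]))  # False
print(can_schedule(exists, [gpu_taint]))       # True
print(can_schedule([], [gpu_taint]))           # False
```

The `wrong_value` case is the typical debugging scenario: the pod has a toleration, but it does not match the taint, so the pod stays Pending.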

CKA Exam Preparation

Understand the difference between NoExecute and NoSchedule effects, especially regarding existing pods. Practice multi-step taint and toleration configurations. Debug scenarios where pods remain unscheduled despite having tolerations by checking taint-toleration matches.

Eviction, Preemption, and Priority Classes

Kubernetes includes mechanisms to handle resource scarcity and ensure critical workloads survive. Node-pressure eviction occurs when a node runs low on a resource such as memory or disk space, triggering the kubelet to terminate pods to reclaim it.

Quality of Service Classes

Eviction policies depend on QoS classes, which are determined by resource requests and limits:

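- Guaranteed: Every container sets CPU and memory requests equal to its limits. These pods are evicted last.

- Burstable: At least one container sets a request or limit, but the pod does not meet the Guaranteed criteria. These pods are evicted second.

- BestEffort: No container sets any requests or limits. These pods are evicted first.

Within this ordering, the kubelet also takes pod priority into account and ranks pods by how far their resource usage exceeds their requests.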
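The classification rules can be sketched as a function over a pod's containers. This is a minimal model with one stated assumption: quantities are compared as plain strings, so equivalent values written differently (for example "500m" and "0.5") would not compare equal here.

```python
def qos_class(containers):
    """Classify a pod from its containers' resources.

    Each container is a dict with optional "requests" and "limits" dicts.
    """
    if all(not c.get("requests") and not c.get("limits") for c in containers):
        return "BestEffort"
    for c in containers:
        limits = c.get("limits", {})
        # When a request is omitted, it defaults to the limit for that resource.
        requests = {**limits, **c.get("requests", {})}
        for resource in ("cpu", "memory"):
            if resource not in limits or requests[resource] != limits[resource]:
                return "Burstable"
    return "Guaranteed"


full = {"requests": {"cpu": "500m", "memory": "256Mi"},
        "limits": {"cpu": "500m", "memory": "256Mi"}}
limits_only = {"limits": {"cpu": "1", "memory": "1Gi"}}
cpu_request_only = {"requests": {"cpu": "250m"}}
empty = {}

print(qos_class([full]))              # Guaranteed
print(qos_class([limits_only]))       # Guaranteed
print(qos_class([cpu_request_only]))  # Burstable
print(qos_class([empty]))             # BestEffort
print(qos_class([full, empty]))       # Burstable
```

The last case shows that a single container without limits is enough to move the whole pod out of the Guaranteed class.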

Priority and Preemption

Pod priority is defined using PriorityClass objects, which assign integer priority values; a pod references one by name in spec.priorityClassName. When the scheduler cannot find a feasible node for a pending pod, it may preempt (evict) lower-priority pods from a node to make room.

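Preemption is not guaranteed. It happens only if removing lower-priority pods would actually make the pending pod schedulable on some node, and a PriorityClass with preemptionPolicy set to Never prevents its pods from preempting others. Understanding when preemption occurs is critical for exam scenarios.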
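The core idea of victim selection on a single node can be sketched as below. This is a deliberately simplified toy, not the scheduler's algorithm: it considers only CPU, ignores PodDisruptionBudgets and graceful termination, and the rule of evicting the lowest priority first is an assumption for illustration.

```python
def pick_victims(free_cpu_m, running, pending_cpu_m, pending_priority):
    """Return the pods to evict so the pending pod fits, or None if it cannot fit.

    running is a list of (name, priority, cpu_request_m) tuples on one node.
    """
    # Only pods with strictly lower priority may be preempted.
    candidates = sorted((p for p in running if p[1] < pending_priority),
                        key=lambda p: p[1])
    victims = []
    for name, _priority, cpu in candidates:
        if free_cpu_m >= pending_cpu_m:
            break                 # enough room already, stop evicting
        victims.append(name)
        free_cpu_m += cpu
    return victims if free_cpu_m >= pending_cpu_m else None


running = [("batch", 100, 500), ("cache", 1000, 500), ("db", 100000, 1000)]

print(pick_victims(200, running, 1000, 5000))  # ['batch', 'cache']
print(pick_victims(200, running, 1000, 50))    # None: nothing has lower priority
print(pick_victims(200, running, 100, 5000))   # []: fits without preemption
```

The second call shows why preemption is not guaranteed: a pending pod with no lower-priority pods to displace simply stays Pending.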

CKA Exam Focus

Understand how QoS classes influence eviction order and the complete flow from resource shortage to pod eviction. Practice scenarios with mixed-priority workloads and predict which pods are evicted first. Know the difference between hard eviction thresholds that trigger immediate action and soft thresholds with grace periods. Be able to create PriorityClass objects and assign priorities in pod specifications.

Custom Schedulers and Scheduling Plugins

Beyond the default scheduler, Kubernetes allows custom schedulers and plugins for specialized workload requirements. Multiple schedulers can coexist in a cluster, each handling different pod classes.

Custom Schedulers

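Custom schedulers are useful when default scheduling logic doesn't meet your needs, such as for ML workloads requiring specific hardware. Each additional scheduler runs as its own workload in the cluster, and a pod selects one by setting the schedulerName field in its spec. Pods that omit the field are handled by the default scheduler, named default-scheduler.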

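Custom schedulers are appropriate for complex resource requirements or domain-specific constraints. However, they add operational complexity and can cause conflicts when two schedulers place pods on the same node at the same time, because each one decides from its own view of the node's free capacity.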
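How pods are divided among coexisting schedulers can be sketched as a simple dispatch on `schedulerName`. The pod dictionaries mirror the manifest structure; the name `gpu-scheduler` and the pod names are hypothetical.

```python
DEFAULT_SCHEDULER = "default-scheduler"


def responsible_scheduler(pod):
    """Name of the scheduler that should place this pod."""
    return pod["spec"].get("schedulerName") or DEFAULT_SCHEDULER


def pods_for(scheduler_name, pods):
    """Unscheduled pods that a given scheduler should pick up."""
    return [p["metadata"]["name"] for p in pods
            if not p["spec"].get("nodeName")
            and responsible_scheduler(p) == scheduler_name]


pods = [
    {"metadata": {"name": "web"}, "spec": {}},
    {"metadata": {"name": "trainer"}, "spec": {"schedulerName": "gpu-scheduler"}},
    {"metadata": {"name": "api"}, "spec": {"nodeName": "node-a"}},  # already bound
]

print(pods_for("default-scheduler", pods))  # ['web']
print(pods_for("gpu-scheduler", pods))      # ['trainer']
```

A pod whose schedulerName does not match any running scheduler is picked up by nobody and stays Pending, which is a common cause of unscheduled pods in multi-scheduler clusters.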

Scheduling Framework and Plugins

The Scheduling Framework, available in Kubernetes 1.16 and later, provides a plugin architecture. You can extend the default scheduler without forking it.

Framework plugins hook into specific extension points in the scheduling cycle:

- PreFilter, Filter, PostFilter (filtering phase)

- PreScore, Score, NormalizeScore (scoring phase)

- Reserve, Permit, PreBind, Bind, PostBind, Unreserve (binding and cleanup)

Each extension point allows plugins to add custom logic for filtering nodes, scoring candidates, or performing actions after decisions.
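The way plugins compose at the Filter and Score extension points can be sketched in a few lines. Real plugins are written in Go against the scheduler's plugin interfaces; the Python classes, plugin names, node fields, and weights below are all hypothetical and only model the idea that every Filter plugin must accept a node and that weighted Score results are summed.

```python
class UntaintedNodeFilter:
    """Hypothetical Filter plugin: rejects nodes marked as tainted."""
    def filter(self, pod, node):
        return not node.get("tainted", False)


class FreeCpuScore:
    """Hypothetical Score plugin: more free CPU gives a higher score (0-100)."""
    weight = 2

    def score(self, pod, node):
        return min(100, node["free_cpu_m"] // 100)


class ZonePreferenceScore:
    """Hypothetical Score plugin: full score when the node is in the preferred zone."""
    weight = 1

    def score(self, pod, node):
        return 100 if node["zone"] == pod.get("preferred_zone") else 0


def run_cycle(pod, nodes, plugins):
    # Filter: a node survives only if every Filter plugin accepts it.
    feasible = [n for n in nodes
                if all(p.filter(pod, n) for p in plugins if hasattr(p, "filter"))]
    # Score: sum each Score plugin's result multiplied by its weight.
    totals = {n["name"]: sum(p.weight * p.score(pod, n)
                             for p in plugins if hasattr(p, "score"))
              for n in feasible}
    return max(totals, key=totals.get) if totals else None


nodes = [
    {"name": "node-a", "free_cpu_m": 4000, "zone": "east"},
    {"name": "node-b", "free_cpu_m": 1000, "zone": "west"},
    {"name": "node-c", "free_cpu_m": 8000, "zone": "west", "tainted": True},
]
pod = {"name": "web", "preferred_zone": "west"}
plugins = [UntaintedNodeFilter(), FreeCpuScore(), ZonePreferenceScore()]

print(run_cycle(pod, nodes, plugins))  # node-b (120 points vs. 80 for node-a)
```

node-c has the most free CPU but never reaches scoring because a Filter plugin rejects it, which mirrors the order of the extension points listed above.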

CKA Exam Preparation

Understand when custom schedulers are appropriate and how to deploy them. Explain how multiple schedulers coexist without conflicting. While deep plugin development is beyond CKA scope, understand the framework's purpose and extension points. Know that the scheduling framework lets you customize scheduling behavior through plugins without forking or replacing the default scheduler.